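1. Introduction

This post presents an improvement that pairs the multi-level feature fusion module SDI with the neck of ASF-YOLO to form a new neck structure. The result is a "secondary innovation" that combines two existing mechanisms. The core idea of SDI is to strengthen both the semantic information and the fine detail in an image by integrating the hierarchical feature maps produced by the encoder. ASF-YOLO (published in December 2023) was designed specifically for cell instance segmentation. By combining spatial and scale features, it improves both accuracy and speed on cell images. In its experiments on the 2018 Data Science Bowl dataset, ASF-YOLO reached a box mAP (mean average precision) of 0.91, a mask mAP of 0.887, and an inference speed of 47.3 FPS. The fused structure is also easy to draw as a diagram when writing a paper. SDI delivers effective accuracy gains in segmentation, so the combination is well suited to object segmentation tasks.

You are welcome to subscribe to my column and learn YOLO together!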

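2. Principles

If you want to read about the underlying principles, see my separate blog posts.

ASF-YOLO: see my separate post introducing the principles of ASF-YOLO.

SDI: see my separate post introducing the principles of SDI.

3. Core code of ASF-YOLO

See Section 4 for how to use it.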

```python
import math

import torch
import torch.nn as nn
import torch.nn.functional as F


def autopad(k, p=None, d=1):  # kernel, padding, dilation
    """Pad to 'same' shape outputs."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""

    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        return self.act(self.conv(x))


class Zoom_cat(nn.Module):
    """Merge three scales onto the middle one by resizing and channel concatenation."""

    def __init__(self):
        super().__init__()
        # self.conv_l_post_down = Conv(in_dim, 2*in_dim, 3, 1, 1)

    def forward(self, x):
        """l, m, s are the large, medium and small scales; all are merged onto the m scale."""
        l, m, s = x[0], x[1], x[2]
        tgt_size = m.shape[2:]
        # Downsample the large map: adaptive max pooling + adaptive average pooling
        l = F.adaptive_max_pool2d(l, tgt_size) + F.adaptive_avg_pool2d(l, tgt_size)
        # l = self.conv_l_post_down(l)
        # m = self.conv_m(m)
        # s = self.conv_s_pre_up(s)
        # Upsample the small map with nearest-neighbour interpolation
        s = F.interpolate(s, m.shape[2:], mode='nearest')
        # s = self.conv_s_post_up(s)
        # Output channels = C_l + C_m + C_s
        return torch.cat([l, m, s], dim=1)


class ScalSeq(nn.Module):
    """Stack P3/P4/P5 (aligned to P3) along a new depth axis and fuse them with 3D ops."""

    def __init__(self, inc, channel):
        super(ScalSeq, self).__init__()
        # 1x1 convs project every input to the same channel count
        self.conv0 = Conv(inc[0], channel, 1)
        self.conv1 = Conv(inc[1], channel, 1)
        self.conv2 = Conv(inc[2], channel, 1)
        self.conv3d = nn.Conv3d(channel, channel, kernel_size=(1, 1, 1))
        self.bn = nn.BatchNorm3d(channel)
        self.act = nn.LeakyReLU(0.1)
        self.pool_3d = nn.MaxPool3d(kernel_size=(3, 1, 1))  # pools across the 3 stacked scales

    def forward(self, x):
        p3, p4, p5 = x[0], x[1], x[2]
        p3 = self.conv0(p3)
        # Project P4/P5 and upsample them to P3's spatial size
        p4_2 = F.interpolate(self.conv1(p4), p3.size()[2:], mode='nearest')
        p5_2 = F.interpolate(self.conv2(p5), p3.size()[2:], mode='nearest')
        # (B, C, H, W) -> (B, C, 1, H, W), then stack along depth: (B, C, 3, H, W)
        combine = torch.cat([p3.unsqueeze(-3), p4_2.unsqueeze(-3), p5_2.unsqueeze(-3)], dim=2)
        x = self.pool_3d(self.act(self.bn(self.conv3d(combine))))  # (B, C, 1, H, W)
        return torch.squeeze(x, 2)  # (B, C, H, W)


class Add(nn.Module):
    """Element-wise sum of two tensors with identical shapes (ch is unused)."""

    def __init__(self, ch=256):
        super().__init__()

    def forward(self, x):
        input1, input2 = x[0], x[1]
        return input1 + input2


class channel_att(nn.Module):
    """ECA-style channel attention: a 1D conv across channels with an adaptively sized kernel."""

    def __init__(self, channel, b=1, gamma=2):
        super(channel_att, self).__init__()
        kernel_size = int(abs((math.log(channel, 2) + b) / gamma))
        kernel_size = kernel_size if kernel_size % 2 else kernel_size + 1  # force an odd kernel
        self.avg_pool = nn.AdaptiveAvgPool2d(1)
        self.conv = nn.Conv1d(1, 1, kernel_size=kernel_size, padding=(kernel_size - 1) // 2, bias=False)
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        y = self.avg_pool(x)                               # (B, C, 1, 1)
        y = y.squeeze(-1).transpose(-1, -2)                # (B, 1, C)
        y = self.conv(y).transpose(-1, -2).unsqueeze(-1)   # back to (B, C, 1, 1)
        y = self.sigmoid(y)
        return x * y.expand_as(x)


class local_att(nn.Module):
    """Coordinate-attention-style gates along height and width (requires channel >= reduction)."""

    def __init__(self, channel, reduction=16):
        super(local_att, self).__init__()
        self.conv_1x1 = nn.Conv2d(in_channels=channel, out_channels=channel // reduction, kernel_size=1, stride=1,
                                  bias=False)
        self.relu = nn.ReLU()
        self.bn = nn.BatchNorm2d(channel // reduction)
        self.F_h = nn.Conv2d(in_channels=channel // reduction, out_channels=channel, kernel_size=1, stride=1,
                             bias=False)
        self.F_w = nn.Conv2d(in_channels=channel // reduction, out_channels=channel, kernel_size=1, stride=1,
                             bias=False)
        self.sigmoid_h = nn.Sigmoid()
        self.sigmoid_w = nn.Sigmoid()

    def forward(self, x):
        _, _, h, w = x.size()
        x_h = torch.mean(x, dim=3, keepdim=True).permute(0, 1, 3, 2)  # (B, C, 1, H)
        x_w = torch.mean(x, dim=2, keepdim=True)                      # (B, C, 1, W)
        x_cat_conv_relu = self.relu(self.bn(self.conv_1x1(torch.cat((x_h, x_w), 3))))
        x_cat_conv_split_h, x_cat_conv_split_w = x_cat_conv_relu.split([h, w], 3)
        s_h = self.sigmoid_h(self.F_h(x_cat_conv_split_h.permute(0, 1, 3, 2)))  # (B, C, H, 1)
        s_w = self.sigmoid_w(self.F_w(x_cat_conv_split_w))                    # (B, C, 1, W)
        return x * s_h.expand_as(x) * s_w.expand_as(x)


class attention_model(nn.Module):
    """Channel attention on the first input, add the second input, then local attention."""

    def __init__(self, ch=256):
        super().__init__()
        self.channel_att = channel_att(ch)
        self.local_att = local_att(ch)

    def forward(self, x):
        input1, input2 = x[0], x[1]
        input1 = self.channel_att(input1)
        x = input1 + input2
        return self.local_att(x)
```
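Zoom_cat resizes the three scales before concatenating them. The large map l is brought down to m's resolution by adding adaptive max pooling and adaptive average pooling. The small map s is upsampled with nearest-neighbour interpolation. The framework-free toy below reproduces that arithmetic on single-channel grids stored as nested lists, so the numbers can be checked by hand. It is only a sketch, and the helper names are hypothetical. It assumes the sizes divide evenly. Under that assumption, adaptive pooling reduces to non-overlapping k×k windows, and nearest interpolation reduces to repeating each value k times.

```python
def pool_max_plus_avg(grid, k):
    """(max + mean) over non-overlapping k x k blocks.

    Mirrors F.adaptive_max_pool2d(l, size) + F.adaptive_avg_pool2d(l, size)
    only when the input size is an exact multiple of the target size (assumption).
    """
    out = []
    for i in range(0, len(grid), k):
        row = []
        for j in range(0, len(grid[0]), k):
            block = [grid[i + di][j + dj] for di in range(k) for dj in range(k)]
            row.append(max(block) + sum(block) / len(block))
        out.append(row)
    return out


def upsample_nearest(grid, k):
    """Repeat each value k times along both axes (nearest interpolation, integer factor)."""
    out = []
    for row in grid:
        wide = [v for v in row for _ in range(k)]
        out.extend(list(wide) for _ in range(k))
    return out


def zoom_cat(l, m, s):
    """Hypothetical toy: align l (larger) and s (smaller) to m's size, stack as channels."""
    l_aligned = pool_max_plus_avg(l, len(l) // len(m))
    s_aligned = upsample_nearest(s, len(m) // len(s))
    return [l_aligned, m, s_aligned]  # stands in for torch.cat([l, m, s], dim=1)


large = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12],
         [13, 14, 15, 16]]
medium = [[0, 0], [0, 0]]
small = [[7]]
for channel in zoom_cat(large, medium, small):
    print(channel)
# [[9.5, 13.5], [25.5, 29.5]]
# [[0, 0], [0, 0]]
# [[7, 7], [7, 7]]
```

In the real module each "channel" above is a full C×H×W tensor. Zoom_cat's output therefore has C_l + C_m + C_s channels, which is the sum that the Zoom_cat branch in parse_model assigns to c2.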

四、手把手教你添加ASF-YOLO

4.1 修改一

第一还是建立文件,我们找到如下 ultralytics /nn文件夹下建立一个目录名字呢就是'Addmodules'文件夹( 用群内的文件的话已经有了无需新建) !然后在其内部建立一个新的py文件将核心代码复制粘贴进去即可。

4.2 修改二

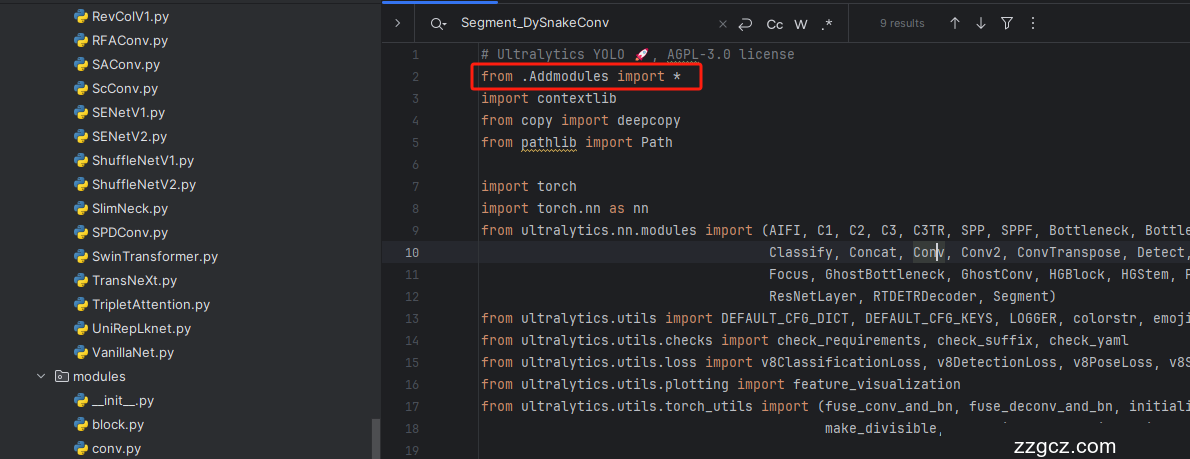

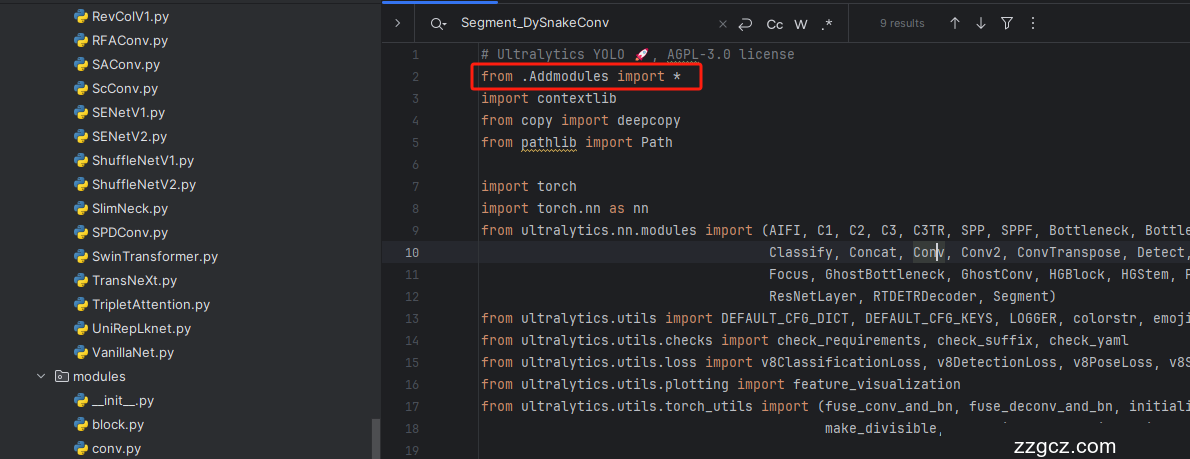

第二步我们在该目录下创建一个新的py文件名字为'__init__.py'( 用群内的文件的话已经有了无需新建) ,然后在其内部导入我们的检测头如下图所示。

4.3 修改三

第三步我门中到如下文件'ultralytics/nn/tasks.py'进行导入和注册我们的模块( 用群内的文件的话已经有了无需重新导入直接开始第四步即可) !

4.4 修改四

按照我的添加在parse_model里添加即可。

```python
# ------------------------------ASF-YOLO--------------------------------
elif m is Zoom_cat:
    c2 = sum(ch[x] for x in f)  # concatenation: channels add up
elif m is Add:
    c2 = ch[f[-1]]  # element-wise sum keeps the channel count
elif m is ScalSeq:
    c1 = [ch[x] for x in f]  # input channels of P3/P4/P5
    c2 = make_divisible(args[0] * width, 8)
    args = [c1, c2]
elif m is attention_model:
    args = [ch[f[-1]]]
    c2 = ch[f[-1]]  # set c2 explicitly, otherwise the previous layer's c2 would be reused
# ------------------------------ASF-YOLO--------------------------------
```
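The Add layer in the yaml files of Section 7 sums ScalSeq's output with layer 18 (C3k2 [256]), so both must have the same channel count at every model scale. The sketch below checks this against the `scales` table from the yaml. It assumes the stock ultralytics rule for Conv/C3k2, `make_divisible(min(c2, max_channels) * width, 8)`, and uses the ScalSeq branch above. The helper names are hypothetical.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


# [depth, width, max_channels] per scale, copied from the yaml `scales` block
SCALES = {
    "n": (0.50, 0.25, 1024),
    "s": (0.50, 0.50, 1024),
    "m": (0.50, 1.00, 512),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.50, 512),
}


def c3k2_channels(c2, width, max_channels):
    # Assumed stock parse_model rule for Conv/C3k2 layers
    return make_divisible(min(c2, max_channels) * width, 8)


def scalseq_channels(c2, width):
    # ScalSeq branch above: no max_channels clamp
    return make_divisible(c2 * width, 8)


results = {}
for name, (_depth, width, max_ch) in SCALES.items():
    p3_branch = c3k2_channels(256, width, max_ch)  # layer 18: C3k2 [256]
    scalseq = scalseq_channels(256, width)          # layer 25: ScalSeq [256]
    results[name] = (p3_branch, scalseq)

for name, (a, b) in results.items():
    print(f"{name}: layer 18 -> {a} ch, ScalSeq -> {b} ch, match={a == b}")
```

The two rules would only diverge if args[0] exceeded max_channels, which cannot happen with ScalSeq's 256 here.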

5. Core code of SDI

See Sections 6 and 7 for how to use the code!

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

__all__ = ['SDI']


class SDI(nn.Module):
    """Fuse several feature maps at the resolution of xs[0] by element-wise multiplication."""

    def __init__(self, channel):
        super().__init__()
        # One 3x3 conv per input, each projecting to channel[0] output channels
        self.convs = nn.ModuleList(
            [nn.Conv2d(c, channel[0], kernel_size=3, stride=1, padding=1) for c in channel])

    def forward(self, xs):
        ans = torch.ones_like(xs[0])
        target_size = xs[0].shape[-2:]  # (H, W) of the first input
        for i, x in enumerate(xs):
            # Compare the input width with the target width (target_size[-1])
            if x.shape[-1] > target_size[-1]:
                x = F.adaptive_avg_pool2d(x, (target_size[0], target_size[1]))
            elif x.shape[-1] < target_size[-1]:
                x = F.interpolate(x, size=(target_size[0], target_size[1]),
                                  mode='bilinear', align_corners=True)
            ans = ans * self.convs[i](x)  # Hadamard-product fusion
        return ans
```
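SDI's forward pass comes down to three steps:

1. Resample every input to the resolution of xs[0], using average pooling for larger maps and interpolation for smaller ones.
2. Pass each resampled input through its own 3×3 conv.
3. Multiply everything element-wise, starting from a tensor of ones.

The pure-Python toy below illustrates the resampling and the element-wise product on single-channel nested lists. It is a simplified sketch with hypothetical helper names. The 3×3 convolutions are treated as identity, bilinear upsampling is replaced by nearest-neighbour repetition, and sizes are assumed to divide evenly.

```python
def avg_pool(grid, k):
    """Non-overlapping k x k average pooling (matches adaptive_avg_pool2d for exact multiples)."""
    return [
        [sum(grid[i + di][j + dj] for di in range(k) for dj in range(k)) / (k * k)
         for j in range(0, len(grid[0]), k)]
        for i in range(0, len(grid), k)
    ]


def upsample_nearest(grid, k):
    """Repeat every value k times along both axes (stand-in for bilinear upsampling)."""
    out = []
    for row in grid:
        wide = [v for v in row for _ in range(k)]
        out.extend(list(wide) for _ in range(k))
    return out


def sdi_fuse(features):
    """Hypothetical toy: align every map to features[0]'s size and multiply element-wise."""
    target_h, target_w = len(features[0]), len(features[0][0])
    fused = [[1.0] * target_w for _ in range(target_h)]  # plays the role of torch.ones_like(xs[0])
    for x in features:
        w = len(x[0])
        if w > target_w:
            x = avg_pool(x, w // target_w)
        elif w < target_w:
            x = upsample_nearest(x, target_w // w)
        # The real module applies self.convs[i](x) here; this toy skips it
        fused = [[a * b for a, b in zip(f_row, x_row)] for f_row, x_row in zip(fused, x)]
    return fused


target = [[1, 2], [3, 4]]
larger = [[1, 3, 0, 2], [1, 3, 2, 0], [4, 4, 1, 1], [4, 4, 1, 1]]
smaller = [[3]]
print(sdi_fuse([target, larger, smaller]))  # [[6.0, 6.0], [36.0, 12.0]]
```

Because the inputs are multiplied rather than added, a near-zero response at a location in any one input suppresses that location in the fused output.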

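6. Step-by-step guide to adding the SDI mechanism

6.1 Modification 1

As before, the first step is to create the file. Under the ultralytics/nn folder, create a directory named 'Addmodules' (if you are using the files from the group, it already exists and you do not need to create it)! Then create a new .py file inside it and paste the core code into it.

6.2 Modification 2

In the second step, create a new .py file named '__init__.py' in that directory (if you are using the files from the group, it already exists), then import our module in it, the same way as in Section 4.2.

6.3 Modification 3

In the third step, open the file 'ultralytics/nn/tasks.py' to import and register our module (if you are using the files from the group, this is already done and you can go straight to step 4)!

From now on, all my tutorials will follow this format, because I assume everyone is using the files from my group to make the modifications!!

6.4 Modification 4

Add the following branch to parse_model as I did.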

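```python
elif m is SDI:
    args = [[ch[x] for x in f]]  # input channels of every source layer
    c2 = ch[f[0]]  # SDI outputs channel[0] channels; set c2 so it is not inherited from the previous layer
```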

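That completes the modifications. You can copy the yaml files below and run them.

7. The fused yaml files

Copy the yaml files below and run them however you usually do. I won't explain how to run them here. If you are unsure, see the videos in the group or my tutorial on running models.

7.1 yaml file: version 1

Model summary for this version (printed during training): YOLO11-ASFYOLO-SDI-1 summary: 397 layers, 3,293,634 parameters, 3,293,618 gradients, 8.2 GFLOPs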

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, Conv, [512, 1, 1]] # 11
  - [4, 1, Conv, [512, 1, 1]] # 12
  - [[-1, 6, -2], 1, Zoom_cat, []] # 13 cat backbone P4
  - [-1, 3, C3k2, [512, False]] # 14
  - [-1, 1, Conv, [256, 1, 1]] # 15
  - [2, 1, Conv, [256, 1, 1]] # 16
  - [[-1, 4, -2], 1, Zoom_cat, []] # 17 cat backbone P3
  - [-1, 3, C3k2, [256, False]] # 18 (P3/8-small)
  - [-1, 1, Conv, [256, 3, 2]] # 19
  - [[-1, 15], 1, SDI, []] # 20 fuse with head P4
  - [-1, 3, C3k2, [512, False]] # 21 (P4/16-medium)
  - [-1, 1, Conv, [512, 3, 2]] # 22
  - [[-1, 11], 1, SDI, []] # 23 fuse with head P5
  - [-1, 3, C3k2, [1024, True]] # 24 (P5/32-large)
  - [[4, 6, 8], 1, ScalSeq, [256]] # 25 args: [out_channels]
  - [[18, -1], 1, Add, [64]] # 26
  - [[26, 21, 24], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
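The sketch below double-checks the channel bookkeeping of version 1 at scale n by replaying the parse_model rules layer by layer. It makes these assumptions:

- Conv, C3k2, SPPF and C2PSA follow the stock ultralytics rule `make_divisible(min(c2, max_channels) * width, 8)`.
- Zoom_cat, ScalSeq and Add follow the branches from Section 4.4.
- SDI outputs `c2 = ch[f[0]]`, since SDI projects every input to `channel[0]`.

The layer list and helper names are a hypothetical transcription for illustration, not ultralytics code.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


WIDTH, MAX_CH = 0.25, 1024  # scale 'n': [0.50, 0.25, 1024]

# (from, module, args[0]) for every layer of version 1; repeats do not affect channels
LAYERS = [
    (-1, "Conv", 64), (-1, "Conv", 128), (-1, "C3k2", 256), (-1, "Conv", 256),    # 0-3
    (-1, "C3k2", 512), (-1, "Conv", 512), (-1, "C3k2", 512), (-1, "Conv", 1024),  # 4-7
    (-1, "C3k2", 1024), (-1, "SPPF", 1024), (-1, "C2PSA", 1024),                  # 8-10
    (-1, "Conv", 512), (4, "Conv", 512), ([-1, 6, -2], "Zoom_cat", None),          # 11-13
    (-1, "C3k2", 512), (-1, "Conv", 256), (2, "Conv", 256),                       # 14-16
    ([-1, 4, -2], "Zoom_cat", None), (-1, "C3k2", 256), (-1, "Conv", 256),         # 17-19
    ([-1, 15], "SDI", None), (-1, "C3k2", 512), (-1, "Conv", 512),                 # 20-22
    ([-1, 11], "SDI", None), (-1, "C3k2", 1024),                                   # 23-24
    ([4, 6, 8], "ScalSeq", 256), ([18, -1], "Add", None),                          # 25-26
]


def trace_channels(layers, width=WIDTH, max_ch=MAX_CH):
    ch = []  # ch[i] = output channels of layer i; negative indices count back from the current layer
    for f, module, arg in layers:
        if module in ("Conv", "C3k2", "SPPF", "C2PSA"):
            c2 = make_divisible(min(arg, max_ch) * width, 8)
        elif module == "Zoom_cat":
            c2 = sum(ch[x] for x in f)  # concatenation
        elif module == "SDI":
            c2 = ch[f[0]]               # every input is projected to channel[0]
        elif module == "ScalSeq":
            c2 = make_divisible(arg * width, 8)
        elif module == "Add":
            c2 = ch[f[-1]]              # element-wise sum
        else:
            raise ValueError(f"unhandled module {module}")
        ch.append(c2)
    return ch


ch = trace_channels(LAYERS)
detect_inputs = [ch[26], ch[21], ch[24]]  # P3, P4, P5
print(detect_inputs)  # [64, 128, 256]
```

Under these assumptions, Add receives two 64-channel maps at P3, and Detect is fed 64, 128 and 256 channels for P3, P4 and P5.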

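7.2 yaml file: version 2

Version 2 differs from version 1 only in layer 26, where attention_model replaces Add.

Model summary for this version (printed during training): YOLO11-ASFYOLO-SDI-2 summary: 409 layers, 3,294,413 parameters, 3,294,397 gradients, 8.2 GFLOPs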

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, Conv, [512, 1, 1]] # 11
  - [4, 1, Conv, [512, 1, 1]] # 12
  - [[-1, 6, -2], 1, Zoom_cat, []] # 13 cat backbone P4
  - [-1, 3, C3k2, [512, False]] # 14
  - [-1, 1, Conv, [256, 1, 1]] # 15
  - [2, 1, Conv, [256, 1, 1]] # 16
  - [[-1, 4, -2], 1, Zoom_cat, []] # 17 cat backbone P3
  - [-1, 3, C3k2, [256, False]] # 18 (P3/8-small)
  - [-1, 1, Conv, [256, 3, 2]] # 19
  - [[-1, 15], 1, SDI, []] # 20 fuse with head P4
  - [-1, 3, C3k2, [512, False]] # 21 (P4/16-medium)
  - [-1, 1, Conv, [512, 3, 2]] # 22
  - [[-1, 11], 1, SDI, []] # 23 fuse with head P5
  - [-1, 3, C3k2, [1024, True]] # 24 (P5/32-large)
  - [[4, 6, 8], 1, ScalSeq, [256]] # 25 args: [out_channels]
  - [[18, -1], 1, attention_model, [256]] # 26 (args are replaced by ch[f[-1]] in parse_model)
  - [[26, 21, 24], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
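In version 2, parse_model builds layer 26's attention_model with `ch[f[-1]]`, which is ScalSeq's output: 64 channels at scale n. The small sketch below evaluates two size rules for a few channel counts. The first is the ECA-style kernel-size formula from `channel_att`. The second is the bottleneck width of `local_att`, `channel // reduction` with the default `reduction=16`. The helper names are hypothetical.

```python
import math


def eca_kernel_size(channel, b=1, gamma=2):
    # Same formula as channel_att.__init__: int(|(log2(C) + b) / gamma|), bumped to the next odd number
    k = int(abs((math.log(channel, 2) + b) / gamma))
    return k if k % 2 else k + 1


def local_att_hidden(channel, reduction=16):
    # Output channels of local_att.conv_1x1
    return channel // reduction


for c in (64, 256, 1024):
    print(c, eca_kernel_size(c), local_att_hidden(c))
# 64 3 4
# 256 5 16
# 1024 5 64
```

The bottleneck rule implies a practical limit. Below 16 input channels, `local_att`'s 1×1 bottleneck collapses to zero channels, so attention_model needs at least 16 channels at the chosen scale.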

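8. Screenshots of the run

9. Summary

That concludes the main content of this post. I'd like to recommend my column on effective accuracy-boosting improvements for YOLOv11. The column is newly launched and has an average quality score of 98. Going forward, I will reproduce papers from the latest top conferences and also supplement some older improvement mechanisms. The column is currently free to read (for now, so follow it early so you don't miss it~). If this post helped you, subscribe to the column and follow for more updates~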