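I. Introduction

The improvement introduced in this article is MLCA (Mixed Local Channel Attention). It combines local and global features as well as channel and spatial information. According to the paper, it is a lightweight attention mechanism that can substantially improve detection accuracy while adding only a small number of parameters (this is the paper's own claim). In my experiments, the mechanism does add very few parameters, and its results are reasonably good. The official code also offers ideas for further (secondary) innovations along with video explanations, which I highly recommend watching. This article also includes one such derived variant, C2PSA_MLCA, which integrates MLCA into C2PSA.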

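II. Basic Framework and Principles of MLCA

Official paper: Official paper link. Note that the paper is not open access and must be purchased to download.

Official code: Official code link

Since the paper is not openly available, I only give a brief analysis based on the official figure.

The figure illustrates the structure and working principle of Mixed Local Channel Attention (MLCA), which combines local and global features as well as channel and spatial information. Below is my summary of its workflow based on the figure: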

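1. The input feature map (C, W, H) is first processed by local average pooling (LAP) and global average pooling (GAP). Local pooling focuses on features of local regions (in the official code, the map is pooled to a fixed local_size × local_size grid, 5 × 5 by default), while global pooling captures statistics of the whole feature map (the code applies GAP to the LAP output).

2. Both the locally pooled and the globally pooled features then pass through a 1D convolution (Conv1d). Following ECA, this convolution models local cross-channel interaction: its kernel size k is derived from the number of channels, and with padding (k - 1) / 2 it keeps the channel count unchanged rather than compressing it.

3. The features are reshaped (Reshape) around the 1D convolution: the channels are laid out as a 1D sequence for the convolution, then restored to their original layout for the subsequent operations.

4. The local branch, after the 1D convolution and reshaping, yields a local attention map that is combined with the original input features by multiplication (×). This acts as a form of feature selection that strengthens attention to useful features.

5. The global branch, after the 1D convolution and reshaping, is combined with the local branch by addition, fusing global context into the attention map. In the official code this is a weighted sum, (1 - local_weight) · att_global + local_weight · att_local with local_weight = 0.5 by default, where both maps are passed through a sigmoid first.

6. Finally, the fused attention map is un-pooled (UNAP) back to the original spatial size. In the official code, un-pooling is done with F.adaptive_avg_pool2d to the input's spatial size, and the multiplication with the input described in step 4 is applied only after this step.

7. The block diagram on the right gives a high-level flowchart of MLCA, showing the overall processing steps from input to output.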
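Two details of the pooling steps can be checked in isolation. The sketch below uses example sizes of our own choosing (8 channels, a 20 × 20 input). It shows that LAP always yields a fixed 5 × 5 grid, and that applying GAP to that grid, as MLCA.forward does, matches GAP on the input when the input size is a multiple of 5. It also shows that "un-pooling" with F.adaptive_avg_pool2d to a larger size behaves like nearest-neighbour upsampling in that case. For sizes that are not multiples of 5, some output positions may average two neighbouring cells instead, so the equivalence is only approximate there.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
x = torch.randn(1, 8, 20, 20)  # example input (b, c, h, w); sizes are illustrative

local = nn.AdaptiveAvgPool2d(5)(x)     # LAP: always (1, 8, 5, 5), whatever h and w are
glob = nn.AdaptiveAvgPool2d(1)(local)  # GAP applied to the LAP output, as in MLCA.forward

# "Un-pooling" as in MLCA: adaptive average pooling to a LARGER spatial size
up = F.adaptive_avg_pool2d(local, [20, 20])
nearest = F.interpolate(local, size=(20, 20), mode="nearest")

print(local.shape, glob.shape)
print(torch.allclose(up, nearest))  # identical here because 20 is a multiple of 5
# With equal-sized 4x4 cells, the mean of the cell means equals the global mean
print(torch.allclose(glob, x.mean(dim=(2, 3), keepdim=True), atol=1e-6))
```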

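Overall, MLCA is designed to strengthen the network's ability to capture useful features while staying computationally efficient. By combining channel and spatial attention at both the local and global levels, it improves accuracy, according to the paper.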
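The "very few parameters" point can be made concrete. MLCA's only learnable weights are its two bias-free Conv1d(1, 1, k) layers, and k follows the ECA rule in MLCA.__init__ (gamma = 2, b = 1). The pooling layers have no weights. The sketch below reproduces that rule on its own. The function names are our own and are not part of the official code.

```python
import math


def eca_kernel_size(channels: int, gamma: int = 2, b: int = 1) -> int:
    """Kernel size of MLCA's Conv1d layers (same expression as in MLCA.__init__)."""
    t = int(abs(math.log(channels, 2) + b) / gamma)
    return t if t % 2 else t + 1  # force an odd kernel so the 'same' padding is symmetric


def mlca_param_count(channels: int) -> int:
    """Two bias-free Conv1d(1, 1, k) layers -> 2 * k learnable weights in total."""
    return 2 * eca_kernel_size(channels)


for c in (16, 64, 256, 1024):
    print(f"channels={c}: k={eca_kernel_size(c)}, MLCA weights={mlca_param_count(c)}")
```

For example, 64 channels give k = 3 (6 weights in total) and 256 channels give k = 5 (10 weights). The count grows only logarithmically with the channel count, which is why MLCA adds so few parameters.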

III. Core Code of MLCA

See Section IV for how to use this code.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
import math

__all__ = ['C2PSA_MLCA', 'MLCA']


class MLCA(nn.Module):
    def __init__(self, in_size, local_size=5, gamma=2, b=1, local_weight=0.5):
        super(MLCA, self).__init__()

        # ECA-style adaptive kernel size for the 1D convolutions
        self.local_size = local_size
        self.gamma = gamma
        self.b = b
        t = int(abs(math.log(in_size, 2) + self.b) / self.gamma)  # eca gamma=2
        k = t if t % 2 else t + 1  # force an odd kernel size

        self.conv = nn.Conv1d(1, 1, kernel_size=k, padding=(k - 1) // 2, bias=False)
        self.conv_local = nn.Conv1d(1, 1, kernel_size=k, padding=(k - 1) // 2, bias=False)

        self.local_weight = local_weight

        self.local_arv_pool = nn.AdaptiveAvgPool2d(local_size)
        self.global_arv_pool = nn.AdaptiveAvgPool2d(1)

    def forward(self, x):
        local_arv = self.local_arv_pool(x)
        global_arv = self.global_arv_pool(local_arv)

        b, c, m, n = x.shape
        b_local, c_local, m_local, n_local = local_arv.shape

        # (b,c,local_size,local_size) -> (b,c,local_size*local_size) -> (b,local_size*local_size,c) -> (b,1,local_size*local_size*c)
        temp_local = local_arv.view(b, c_local, -1).transpose(-1, -2).reshape(b, 1, -1)
        temp_global = global_arv.view(b, c, -1).transpose(-1, -2)  # (b,1,c)

        y_local = self.conv_local(temp_local)
        y_global = self.conv(temp_global)

        # (b,c,local_size,local_size) <- (b,c,local_size*local_size) <- (b,local_size*local_size,c) <- (b,1,local_size*local_size*c)
        y_local_transpose = y_local.reshape(b, self.local_size * self.local_size, c).transpose(-1, -2).view(
            b, c, self.local_size, self.local_size)
        # Corrected version of: y_global.view(b, -1).transpose(-1, -2).unsqueeze(-1)
        y_global_transpose = y_global.view(b, -1).unsqueeze(-1).unsqueeze(-1)  # (b,c,1,1)

        # Sigmoid attention maps; the global map is expanded to the local grid
        att_local = y_local_transpose.sigmoid()
        att_global = F.adaptive_avg_pool2d(y_global_transpose.sigmoid(), [self.local_size, self.local_size])

        # Weighted fusion of both maps, then un-pooling back to the input size (m, n)
        att_all = F.adaptive_avg_pool2d(att_global * (1 - self.local_weight) + (att_local * self.local_weight), [m, n])

        x = x * att_all
        return x


def autopad(k, p=None, d=1):  # kernel, padding, dilation
    """Pad to 'same' shape outputs."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""

    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        """Initialize Conv layer with given arguments including activation."""
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        """Apply convolution, batch normalization and activation to input tensor."""
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        """Apply convolution and activation without batch normalization (used after BN is fused into the conv)."""
        return self.act(self.conv(x))


class PSABlock(nn.Module):
    """
    PSABlock class implementing a Position-Sensitive Attention block for neural networks.

    In this variant, the multi-head attention of the original PSABlock is replaced by MLCA. The block applies
    MLCA attention and feed-forward neural network layers with optional shortcut connections.

    Attributes:
        attn (MLCA): MLCA attention module.
        ffn (nn.Sequential): Feed-forward neural network module.
        add (bool): Flag indicating whether to add shortcut connections.

    Methods:
        forward: Performs a forward pass through the PSABlock, applying attention and feed-forward layers.

    Notes:
        attn_ratio and num_heads are kept for interface compatibility but are not used by MLCA.

    Examples:
        Create a PSABlock and perform a forward pass
        >>> psablock = PSABlock(c=128, attn_ratio=0.5, num_heads=4, shortcut=True)
        >>> input_tensor = torch.randn(1, 128, 32, 32)
        >>> output_tensor = psablock(input_tensor)
    """

    def __init__(self, c, attn_ratio=0.5, num_heads=4, shortcut=True) -> None:
        """Initializes the PSABlock with attention and feed-forward layers for enhanced feature extraction."""
        super().__init__()
        self.attn = MLCA(c)
        self.ffn = nn.Sequential(Conv(c, c * 2, 1), Conv(c * 2, c, 1, act=False))
        self.add = shortcut

    def forward(self, x):
        """Executes a forward pass through PSABlock, applying attention and feed-forward layers to the input tensor."""
        x = x + self.attn(x) if self.add else self.attn(x)
        x = x + self.ffn(x) if self.add else self.ffn(x)
        return x


class C2PSA_MLCA(nn.Module):
    """
    C2PSA module with MLCA attention for enhanced feature extraction and processing.

    This module implements a convolutional block with attention mechanisms to enhance feature extraction and processing
    capabilities. It includes a series of PSABlock modules (using MLCA) for attention and feed-forward operations.

    Attributes:
        c (int): Number of hidden channels.
        cv1 (Conv): 1x1 convolution layer to reduce the number of input channels to 2*c.
        cv2 (Conv): 1x1 convolution layer to reduce the number of output channels to c.
        m (nn.Sequential): Sequential container of PSABlock modules for attention and feed-forward operations.

    Methods:
        forward: Performs a forward pass through the C2PSA_MLCA module, applying attention and feed-forward operations.

    Notes:
        This module is essentially the same as the PSA module, but refactored to allow stacking more PSABlock modules.

    Examples:
        >>> c2psa = C2PSA_MLCA(c1=256, c2=256, n=3, e=0.5)
        >>> input_tensor = torch.randn(1, 256, 64, 64)
        >>> output_tensor = c2psa(input_tensor)
    """

    def __init__(self, c1, c2, n=1, e=0.5):
        """Initializes the C2PSA_MLCA module with specified input/output channels, number of layers, and expansion ratio."""
        super().__init__()
        assert c1 == c2
        self.c = int(c1 * e)
        self.cv1 = Conv(c1, 2 * self.c, 1, 1)
        self.cv2 = Conv(2 * self.c, c1, 1)

        self.m = nn.Sequential(*(PSABlock(self.c, attn_ratio=0.5, num_heads=self.c // 64) for _ in range(n)))

    def forward(self, x):
        """Processes the input tensor 'x' through a series of PSA blocks and returns the transformed tensor."""
        a, b = self.cv1(x).split((self.c, self.c), dim=1)
        b = self.m(b)
        return self.cv2(torch.cat((a, b), 1))


if __name__ == "__main__":
    # Generate a sample input
    image_size = (1, 64, 240, 240)
    image = torch.rand(*image_size)

    # Model
    model = C2PSA_MLCA(64, 64)

    out = model(image)
    print(out.size())
```
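The shape comments in MLCA.forward are easy to misread, so here is a standalone check with arbitrary example sizes. It repeats the local-branch reshapes using an identity Conv1d (kernel [0, 1, 0], chosen only for this test, not MLCA's learned weights). The check shows two things. First, the round trip restores the pooled map exactly. Second, in the flattened sequence the C channels of each grid cell sit next to each other. A k-wide Conv1d therefore mostly mixes neighbouring channels of the same cell, and kernels that straddle a cell boundary also touch the first channels of the next cell.

```python
import torch
import torch.nn as nn

b, c, s = 2, 8, 5  # example batch size, channels and local_size
local_arv = torch.randn(b, c, s, s)  # stands in for the LAP output

# Flatten as in MLCA.forward: (b,c,s,s) -> (b,c,s*s) -> (b,s*s,c) -> (b,1,s*s*c)
temp_local = local_arv.view(b, c, -1).transpose(-1, -2).reshape(b, 1, -1)

# Identity Conv1d (test-only weights) so the round trip can be compared directly
conv = nn.Conv1d(1, 1, kernel_size=3, padding=1, bias=False)
with torch.no_grad():
    conv.weight.zero_()
    conv.weight[0, 0, 1] = 1.0
y_local = conv(temp_local)

# Restore as in MLCA.forward: (b,1,s*s*c) -> (b,s*s,c) -> (b,c,s*s) -> (b,c,s,s)
restored = y_local.reshape(b, s * s, c).transpose(-1, -2).reshape(b, c, s, s)

print(torch.allclose(restored, local_arv))  # round trip is lossless
# The first c entries of the sequence are all channels of grid cell (0, 0)
print(torch.equal(temp_local[0, 0, :c], local_arv[0, :, 0, 0]))
```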

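IV. Step-by-Step Guide to Adding MLCA

4.1 Modification 1

The first step, as usual, is to create the file. Under the ultralytics/nn/modules folder, create a directory named 'Addmodules' (if you use the files from my group, it already exists and does not need to be created). Then create a new .py file inside it and paste the core code into it.

4.2 Modification 2

In the second step, create a new file named '__init__.py' in that directory (already present if you use the group files), and import our modules in it as shown in the figure below.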

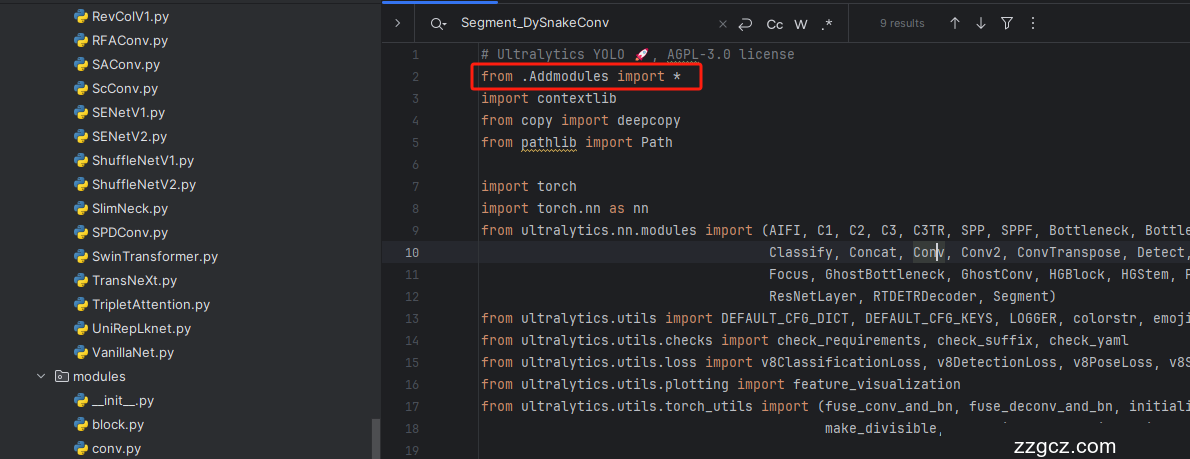

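4.3 Modification 3

In the third step, open 'ultralytics/nn/tasks.py' to import and register our modules (if you use the group files, they are already imported, so you can go straight to step four)!

From now on, all tutorials will follow this format, since I assume everyone is using the files from my group to make the changes!

4.4 Modification 4

Add the modules to parse_model following my additions.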
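The parse_model changes themselves are not reproduced here. As a rough illustration of why they are needed: the 5.2 YAML writes MLCA with an empty argument list `[]`, so MLCA's `in_size` has to be filled in from the previous layer's output channels. The standalone sketch below mimics that bookkeeping under our own assumptions. `infer_args` is a hypothetical helper, not Ultralytics code, and is not meant to be pasted into tasks.py. `make_divisible` applies the same round-up-to-a-multiple-of-8 rule that Ultralytics uses for channel widths, and the repeat count `n` is assumed to be already depth-scaled.

```python
import math


def make_divisible(x: float, divisor: int = 8) -> int:
    """Round x up to the nearest multiple of divisor (channel-width rounding)."""
    return math.ceil(x / divisor) * divisor


def infer_args(module, yaml_args, c_in, width=0.25, max_channels=1024, n=1):
    """Hypothetical, simplified mimic of parse_model's channel bookkeeping.

    Returns (constructor_args, output_channels). Not the Ultralytics implementation.
    """
    if module == "MLCA":
        # The YAML gives [], so in_size comes from the previous layer's channels;
        # MLCA only re-weights features, so the channel count is unchanged.
        return [c_in, *yaml_args], c_in
    if module == "C2PSA_MLCA":
        # args[0] is the nominal width, scaled like other C2PSA-style blocks;
        # the (already depth-scaled) repeat count n becomes the third argument.
        c_out = make_divisible(min(yaml_args[0], max_channels) * width, 8)
        return [c_in, c_out, n, *yaml_args[1:]], c_out
    raise ValueError(f"module {module!r} is not handled in this sketch")


# P3 output of YOLO11n has 64 channels (256 * width 0.25), so MLCA(64) is built there
print(infer_args("MLCA", [], c_in=64))
# Layer 10 of the 5.1 YAML at scale n: 1024 * 0.25 -> 256 channels, so C2PSA_MLCA(256, 256, 1)
print(infer_args("C2PSA_MLCA", [1024], c_in=256))
```

Because C2PSA_MLCA asserts `c1 == c2`, its scaled output width must match the incoming channel count, which holds here (256 in, 256 out).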

That completes the modifications. You can copy the YAML files below and run them.

V. MLCA YAML Files and Training Records

5.1 YAML File for C2PSA_MLCA

Training info: YOLO11-C2PSA-MLCA summary: 312 layers, 2,543,397 parameters, 2,543,381 gradients, 6.4 GFLOPs

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA_MLCA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

5.2 YAML File for MLCA

Training info for this version: YOLO11-MLCA summary: 334 layers, 2,594,741 parameters, 2,594,725 gradients, 6.5 GFLOPs

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)
  - [-1, 1, MLCA, []] # 17 (P3/8-small) attention added at the small-object detection layer output

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 20 (P4/16-medium)
  - [-1, 1, MLCA, []] # 21 (P4/16-medium) attention added at the medium-object detection layer output

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 24 (P5/32-large)
  - [-1, 1, MLCA, []] # 25 (P5/32-large) attention added at the large-object detection layer output

  # Which layers get the attention mechanism can be chosen according to your own dataset and scenario.
  # If you place the attention layers yourself, make sure the `from` indices [17, 21, 25] match the corresponding detection layers!
  - [[17, 21, 25], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
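The warning about keeping the `from` indices consistent follows from simple bookkeeping. Inserting one layer right after original layer j shifts every later layer by one, while earlier layers keep their indices. The helpers below have hypothetical names and are for illustration only. They reproduce that arithmetic for the three MLCA layers inserted after the original P3/P4/P5 outputs (16, 19 and 22) of the standard YOLO11 head.

```python
def shifted_index(old_idx, inserted_after):
    """New index of an original layer after one extra layer is inserted
    right after each original index in `inserted_after` (hypothetical helper)."""
    return old_idx + sum(1 for j in inserted_after if j < old_idx)


def inserted_indices(inserted_after):
    """New indices of the inserted layers themselves."""
    return [shifted_index(j, inserted_after) + 1 for j in sorted(inserted_after)]


# Original YOLO11 head: C3k2 outputs at 16 (P3), 19 (P4), 22 (P5); Concat inputs 10 and 13
mlca_after = [16, 19, 22]
print(inserted_indices(mlca_after))  # [17, 21, 25] -> the Detect inputs
print([shifted_index(i, mlca_after) for i in (10, 13, 16, 19, 22)])  # [10, 13, 16, 20, 24]
```

This matches the comments in the YAML above: the C3k2 outputs move to 16, 20 and 24, the Concat references to 10 and 13 stay unchanged, and Detect reads from the MLCA layers at 17, 21 and 25.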

5.3 Training Code

Create a .py file, paste in the code below, and set your own file paths to run it.

```python
import warnings
warnings.filterwarnings('ignore')
from ultralytics import YOLO

if __name__ == '__main__':
    model = YOLO(r'path/to/yolo11-MLCA.yaml')  # placeholder: path to one of the YAML files above
    # model.load('yolo11n.pt')  # load pretrained weights
    model.train(data=r'path/to/your/dataset.yaml',  # replace with the path to your dataset YAML
                # For other tasks, find 'ultralytics/cfg/default.yaml' and change task to detect, segment, classify or pose
                cache=False,
                imgsz=640,
                epochs=150,
                single_cls=False,  # whether to train as single-class detection
                batch=4,
                close_mosaic=10,
                workers=0,
                device='0',
                optimizer='SGD',  # using SGD
                # resume='',  # to resume training, set this to the path of last.pt
                amp=False,  # disable AMP if the training loss becomes NaN
                project='runs/train',
                name='exp',
                )
```

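5.4 Training Screenshots for MLCA

VI. Summary

That concludes the main content of this article. I would like to recommend my YOLOv11 improvement column (effective accuracy gains). The column is newly launched and has an average quality score of 98. Going forward, I will reproduce papers from the latest top conferences and also supplement some older improvement mechanisms. The column is currently free to read (temporarily, so follow early to stay up to date). If this article helped you, please subscribe to the column and follow for more updates.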