1. Introduction

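This post presents an improvement that uses FreqFusion, a mechanism published in TPAMI 2024, to rework BiFPN. The paper, "Frequency-aware Feature Fusion for Dense Image Prediction", proposes a new feature fusion method (FreqFusion) that targets intra-category inconsistency and boundary displacement in dense image prediction. Here FreqFusion is combined with BiFPN to form a modified BiFPN neck. Compared with the original YOLOv11, the resulting model is somewhat lighter in parameter count (about 2.44M parameters versus 2.62M for YOLO11n, although GFLOPs rise slightly from 6.6 to 6.9), and it improved accuracy on the author's multi-class dataset.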

2. How It Works

Official paper: "Frequency-aware Feature Fusion for Dense Image Prediction" (TPAMI 2024)


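The main contribution of "Frequency-aware Feature Fusion for Dense Image Prediction" is a new feature fusion method that addresses intra-category inconsistency and boundary displacement in dense image prediction. The paper introduces quite a few concepts; a brief summary follows.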

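Problem definition:

Dense image prediction tasks (such as semantic segmentation, object detection, and instance segmentation) depend on accurate category information and precise spatial boundaries. Conventional feature fusion handles neither well: features inside a single category can differ strongly from one part of the object to another (intra-category inconsistency), and boundaries become blurred.

Solution: FreqFusion

The paper proposes **Frequency-aware Feature Fusion (FreqFusion)**, which improves fusion through three main components:

1. Adaptive Low-Pass Filter (ALPF) generator: predicts spatially variant low-pass filters that smooth the high-level features and reduce intra-category inconsistency.

2. Offset generator: resamples features so that inconsistent features are replaced by nearby features with higher category consistency, which further sharpens boundaries.

3. Adaptive High-Pass Filter (AHPF) generator: restores the high-frequency information lost during downsampling and improves boundary detail.

Advantages:

Better intra-category consistency: the ALPF reduces feature fluctuation inside an object and raises similarity within a category.

Boundary refinement: the offset generator and the AHPF correct object boundaries and make them sharper.

Broad applicability: the method was validated on several tasks, including semantic segmentation, object detection, and instance segmentation.

Experimental results:

In semantic segmentation, FreqFusion gives a clear improvement over existing methods on several datasets (such as Cityscapes and ADE20K), for example 2.8 mIoU over the previous best method on ADE20K.

In object detection, a Faster R-CNN equipped with FreqFusion gains 1.8 AP on MS COCO.

Instance segmentation and panoptic segmentation also show clear performance gains.

Summary:

By combining adaptive low-pass and high-pass filters, FreqFusion addresses the intra-category inconsistency and boundary blur of standard feature fusion and improves prediction quality across several computer vision tasks.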
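
To make the low-pass and high-pass idea concrete, here is a minimal sketch on 1D lists in plain Python. It is an illustration under simplifying assumptions, not the paper's implementation: the kernel is a fixed, spatially uniform 3-tap filter, whereas FreqFusion predicts a different kernel at every location. The function names `softmax`, `low_pass`, and `high_pass_enhance` are made up for this example.

```python
import math


def softmax(logits):
    """Turn raw kernel logits into non-negative weights that sum to 1."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def low_pass(signal, weights):
    """Weighted moving average with edge replication (fixed stand-in for ALPF)."""
    r = len(weights) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for k, w in enumerate(weights):
            j = min(max(i + k - r, 0), len(signal) - 1)  # clamp index at the borders
            acc += w * signal[j]
        out.append(acc)
    return out


def high_pass_enhance(signal, weights):
    """Add the high-frequency residual back: x + (x - low_pass(x))."""
    smooth = low_pass(signal, weights)
    return [x + (x - s) for x, s in zip(signal, smooth)]


weights = softmax([0.0, 0.0, 0.0])            # uniform 3-tap kernel
noisy_region = [1.0, 1.2, 0.8, 1.1, 0.9]      # one "category" with small fluctuations
step_edge = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]    # an object boundary

smoothed = low_pass(noisy_region, weights)    # fluctuations shrink
sharpened = high_pass_enhance(step_edge, weights)  # the jump at the edge grows
```

Low-pass filtering narrows the spread of values inside a region, and adding the high-frequency residual back widens the jump across an edge, which is the intuition behind pairing the two filters.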

3. Core Code

See Section 4 for how to use the core code.

    # TPAMI 2024: Frequency-aware Feature Fusion for Dense Image Prediction
    import warnings

    import numpy as np
    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    # normal_init is defined locally below, so only xavier_init and carafe are imported
    from mmcv.ops.carafe import xavier_init, carafe

    __all__ = ['FreqFusion']


    def normal_init(module, mean=0, std=1, bias=0):
        if hasattr(module, 'weight') and module.weight is not None:
            nn.init.normal_(module.weight, mean, std)
        if hasattr(module, 'bias') and module.bias is not None:
            nn.init.constant_(module.bias, bias)


    def constant_init(module, val, bias=0):
        if hasattr(module, 'weight') and module.weight is not None:
            nn.init.constant_(module.weight, val)
        if hasattr(module, 'bias') and module.bias is not None:
            nn.init.constant_(module.bias, bias)


    def resize(input,
               size=None,
               scale_factor=None,
               mode='nearest',
               align_corners=None,
               warning=True):
        if warning:
            if size is not None and align_corners:
                input_h, input_w = tuple(int(x) for x in input.shape[2:])
                output_h, output_w = tuple(int(x) for x in size)
                if output_h > input_h or output_w > input_w:
                    if ((output_h > 1 and output_w > 1 and input_h > 1
                         and input_w > 1) and (output_h - 1) % (input_h - 1)
                            and (output_w - 1) % (input_w - 1)):
                        warnings.warn(
                            f'When align_corners={align_corners}, '
                            'the output would more aligned if '
                            f'input size {(input_h, input_w)} is `x+1` and '
                            f'out size {(output_h, output_w)} is `nx+1`')
        return F.interpolate(input, size, scale_factor, mode, align_corners)


    def hamming2D(M, N):
        """
        Build a 2D Hamming window.
        Args:
        - M: number of rows of the window
        - N: number of columns of the window
        Returns:
        - the 2D Hamming window
        """
        # 1D Hamming windows along each axis
        # hamming_x = np.blackman(M)
        # hamming_x = np.kaiser(M)
        hamming_x = np.hamming(M)
        hamming_y = np.hamming(N)
        # The outer product gives the 2D window
        hamming_2d = np.outer(hamming_x, hamming_y)
        return hamming_2d

    class FreqFusion(nn.Module):
        def __init__(self,
                     channels,
                     scale_factor=1,
                     lowpass_kernel=5,
                     highpass_kernel=3,
                     up_group=1,
                     encoder_kernel=3,
                     encoder_dilation=1,
                     compressed_channels=64,
                     align_corners=False,
                     upsample_mode='nearest',
                     feature_resample=False,  # use offset generator or not
                     feature_resample_group=4,
                     comp_feat_upsample=True,  # use ALPF & AHPF for init upsampling
                     use_high_pass=True,
                     use_low_pass=True,
                     hr_residual=True,
                     semi_conv=True,
                     hamming_window=True,  # for regularization, do not matter really
                     feature_resample_norm=True,
                     **kwargs):
            super().__init__()
            hr_channels, lr_channels = channels
            self.scale_factor = scale_factor
            self.lowpass_kernel = lowpass_kernel
            self.highpass_kernel = highpass_kernel
            self.up_group = up_group
            self.encoder_kernel = encoder_kernel
            self.encoder_dilation = encoder_dilation
            self.compressed_channels = compressed_channels
            self.hr_channel_compressor = nn.Conv2d(hr_channels, self.compressed_channels, 1)
            self.lr_channel_compressor = nn.Conv2d(lr_channels, self.compressed_channels, 1)
            self.content_encoder = nn.Conv2d(  # ALPF generator
                self.compressed_channels,
                lowpass_kernel ** 2 * self.up_group * self.scale_factor * self.scale_factor,
                self.encoder_kernel,
                padding=int((self.encoder_kernel - 1) * self.encoder_dilation / 2),
                dilation=self.encoder_dilation,
                groups=1)
            self.align_corners = align_corners
            self.upsample_mode = upsample_mode
            self.hr_residual = hr_residual
            self.use_high_pass = use_high_pass
            self.use_low_pass = use_low_pass
            self.semi_conv = semi_conv
            self.feature_resample = feature_resample
            self.comp_feat_upsample = comp_feat_upsample
            if self.feature_resample:
                self.dysampler = LocalSimGuidedSampler(in_channels=compressed_channels, scale=2, style='lp', groups=feature_resample_group, use_direct_scale=True, kernel_size=encoder_kernel, norm=feature_resample_norm)
            if self.use_high_pass:
                self.content_encoder2 = nn.Conv2d(  # AHPF generator
                    self.compressed_channels,
                    highpass_kernel ** 2 * self.up_group * self.scale_factor * self.scale_factor,
                    self.encoder_kernel,
                    padding=int((self.encoder_kernel - 1) * self.encoder_dilation / 2),
                    dilation=self.encoder_dilation,
                    groups=1)
            self.hamming_window = hamming_window
            lowpass_pad = 0
            highpass_pad = 0
            if self.hamming_window:
                self.register_buffer('hamming_lowpass', torch.FloatTensor(hamming2D(lowpass_kernel + 2 * lowpass_pad, lowpass_kernel + 2 * lowpass_pad))[None, None,])
                self.register_buffer('hamming_highpass', torch.FloatTensor(hamming2D(highpass_kernel + 2 * highpass_pad, highpass_kernel + 2 * highpass_pad))[None, None,])
            else:
                self.register_buffer('hamming_lowpass', torch.FloatTensor([1.0]))
                self.register_buffer('hamming_highpass', torch.FloatTensor([1.0]))
            self.init_weights()

        def init_weights(self):
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    xavier_init(m, distribution='uniform')
            normal_init(self.content_encoder, std=0.001)
            if self.use_high_pass:
                normal_init(self.content_encoder2, std=0.001)

        def kernel_normalizer(self, mask, kernel, scale_factor=None, hamming=1):
            if scale_factor is not None:
                mask = F.pixel_shuffle(mask, self.scale_factor)
            n, mask_c, h, w = mask.size()
            mask_channel = int(mask_c / float(kernel**2))
            mask = mask.view(n, mask_channel, -1, h, w)
            mask = F.softmax(mask, dim=2, dtype=mask.dtype)
            mask = mask.view(n, mask_channel, kernel, kernel, h, w)
            mask = mask.permute(0, 1, 4, 5, 2, 3).view(n, -1, kernel, kernel)
            # mask = F.pad(mask, pad=[padding] * 4, mode=self.padding_mode) # kernel + 2 * padding
            mask = mask * hamming
            mask /= mask.sum(dim=(-1, -2), keepdims=True)
            mask = mask.view(n, mask_channel, h, w, -1)
            mask = mask.permute(0, 1, 4, 2, 3).view(n, -1, h, w).contiguous()
            return mask

        def forward(self, x):
            hr_feat, lr_feat = x
            compressed_hr_feat = self.hr_channel_compressor(hr_feat)
            compressed_lr_feat = self.lr_channel_compressor(lr_feat)
            if self.semi_conv:
                if self.comp_feat_upsample:
                    if self.use_high_pass:
                        mask_hr_hr_feat = self.content_encoder2(compressed_hr_feat)
                        mask_hr_init = self.kernel_normalizer(mask_hr_hr_feat, self.highpass_kernel, hamming=self.hamming_highpass)
                        compressed_hr_feat = compressed_hr_feat + compressed_hr_feat - carafe(compressed_hr_feat, mask_hr_init, self.highpass_kernel, self.up_group, 1)

                        mask_lr_hr_feat = self.content_encoder(compressed_hr_feat)
                        mask_lr_init = self.kernel_normalizer(mask_lr_hr_feat, self.lowpass_kernel, hamming=self.hamming_lowpass)
                        mask_lr_lr_feat_lr = self.content_encoder(compressed_lr_feat)
                        mask_lr_lr_feat = F.interpolate(
                            carafe(mask_lr_lr_feat_lr, mask_lr_init, self.lowpass_kernel, self.up_group, 2), size=compressed_hr_feat.shape[-2:], mode='nearest')
                        mask_lr = mask_lr_hr_feat + mask_lr_lr_feat

                        mask_lr_init = self.kernel_normalizer(mask_lr, self.lowpass_kernel, hamming=self.hamming_lowpass)
                        mask_hr_lr_feat = F.interpolate(
                            carafe(self.content_encoder2(compressed_lr_feat), mask_lr_init, self.lowpass_kernel, self.up_group, 2), size=compressed_hr_feat.shape[-2:], mode='nearest')
                        mask_hr = mask_hr_hr_feat + mask_hr_lr_feat
                    else:
                        raise NotImplementedError
                else:
                    mask_lr = self.content_encoder(compressed_hr_feat) + F.interpolate(self.content_encoder(compressed_lr_feat), size=compressed_hr_feat.shape[-2:], mode='nearest')
                    if self.use_high_pass:
                        mask_hr = self.content_encoder2(compressed_hr_feat) + F.interpolate(self.content_encoder2(compressed_lr_feat), size=compressed_hr_feat.shape[-2:], mode='nearest')
            else:
                compressed_x = F.interpolate(compressed_lr_feat, size=compressed_hr_feat.shape[-2:], mode='nearest') + compressed_hr_feat
                mask_lr = self.content_encoder(compressed_x)
                if self.use_high_pass:
                    mask_hr = self.content_encoder2(compressed_x)

            mask_lr = self.kernel_normalizer(mask_lr, self.lowpass_kernel, hamming=self.hamming_lowpass)
            if self.semi_conv:
                lr_feat = carafe(lr_feat, mask_lr, self.lowpass_kernel, self.up_group, 2)
            else:
                lr_feat = resize(
                    input=lr_feat,
                    size=hr_feat.shape[2:],
                    mode=self.upsample_mode,
                    align_corners=None if self.upsample_mode == 'nearest' else self.align_corners)
                lr_feat = carafe(lr_feat, mask_lr, self.lowpass_kernel, self.up_group, 1)

            if self.use_high_pass:
                mask_hr = self.kernel_normalizer(mask_hr, self.highpass_kernel, hamming=self.hamming_highpass)
                hr_feat_hf = hr_feat - carafe(hr_feat, mask_hr, self.highpass_kernel, self.up_group, 1)
                if self.hr_residual:
                    hr_feat = hr_feat_hf + hr_feat
                else:
                    hr_feat = hr_feat_hf

            if self.feature_resample:
                lr_feat = self.dysampler(hr_x=compressed_hr_feat,
                                         lr_x=compressed_lr_feat, feat2sample=lr_feat)

            return hr_feat + lr_feat

    class LocalSimGuidedSampler(nn.Module):
        """
        offset generator in FreqFusion
        """
        def __init__(self, in_channels, scale=2, style='lp', groups=4, use_direct_scale=True, kernel_size=1, local_window=3, sim_type='cos', norm=True, direction_feat='sim_concat'):
            super().__init__()
            assert scale == 2
            assert style == 'lp'

            self.scale = scale
            self.style = style
            self.groups = groups
            self.local_window = local_window
            self.sim_type = sim_type
            self.direction_feat = direction_feat

            if style == 'pl':
                assert in_channels >= scale ** 2 and in_channels % scale ** 2 == 0
            assert in_channels >= groups and in_channels % groups == 0

            if style == 'pl':
                in_channels = in_channels // scale ** 2
                out_channels = 2 * groups
            else:
                out_channels = 2 * groups * scale ** 2
            if self.direction_feat == 'sim':
                self.offset = nn.Conv2d(local_window**2 - 1, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
            elif self.direction_feat == 'sim_concat':
                self.offset = nn.Conv2d(in_channels + local_window**2 - 1, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
            else:
                raise NotImplementedError
            normal_init(self.offset, std=0.001)
            if use_direct_scale:
                if self.direction_feat == 'sim':
                    self.direct_scale = nn.Conv2d(in_channels, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
                elif self.direction_feat == 'sim_concat':
                    self.direct_scale = nn.Conv2d(in_channels + local_window**2 - 1, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
                else:
                    raise NotImplementedError
                constant_init(self.direct_scale, val=0.)

            out_channels = 2 * groups
            if self.direction_feat == 'sim':
                self.hr_offset = nn.Conv2d(local_window**2 - 1, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
            elif self.direction_feat == 'sim_concat':
                self.hr_offset = nn.Conv2d(in_channels + local_window**2 - 1, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
            else:
                raise NotImplementedError
            normal_init(self.hr_offset, std=0.001)

            if use_direct_scale:
                if self.direction_feat == 'sim':
                    self.hr_direct_scale = nn.Conv2d(in_channels, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
                elif self.direction_feat == 'sim_concat':
                    self.hr_direct_scale = nn.Conv2d(in_channels + local_window**2 - 1, out_channels, kernel_size=kernel_size, padding=kernel_size//2)
                else:
                    raise NotImplementedError
                constant_init(self.hr_direct_scale, val=0.)

            self.norm = norm
            if self.norm:
                self.norm_hr = nn.GroupNorm(in_channels // 8, in_channels)
                self.norm_lr = nn.GroupNorm(in_channels // 8, in_channels)
            else:
                self.norm_hr = nn.Identity()
                self.norm_lr = nn.Identity()
            self.register_buffer('init_pos', self._init_pos())

        def _init_pos(self):
            h = torch.arange((-self.scale + 1) / 2, (self.scale - 1) / 2 + 1) / self.scale
            return torch.stack(torch.meshgrid([h, h])).transpose(1, 2).repeat(1, self.groups, 1).reshape(1, -1, 1, 1)

        def sample(self, x, offset, scale=None):
            if scale is None:
                scale = self.scale
            B, _, H, W = offset.shape
            offset = offset.view(B, 2, -1, H, W)
            coords_h = torch.arange(H) + 0.5
            coords_w = torch.arange(W) + 0.5
            coords = torch.stack(torch.meshgrid([coords_w, coords_h])
                                 ).transpose(1, 2).unsqueeze(1).unsqueeze(0).type(x.dtype).to(x.device)
            normalizer = torch.tensor([W, H], dtype=x.dtype, device=x.device).view(1, 2, 1, 1, 1)
            coords = 2 * (coords + offset) / normalizer - 1
            coords = F.pixel_shuffle(coords.view(B, -1, H, W), scale).view(
                B, 2, -1, scale * H, scale * W).permute(0, 2, 3, 4, 1).contiguous().flatten(0, 1)
            return F.grid_sample(x.reshape(B * self.groups, -1, x.size(-2), x.size(-1)), coords, mode='bilinear',
                                 align_corners=False, padding_mode="border").view(B, -1, scale * H, scale * W)

        def forward(self, hr_x, lr_x, feat2sample):
            hr_x = self.norm_hr(hr_x)
            lr_x = self.norm_lr(lr_x)

            if self.direction_feat == 'sim':
                hr_sim = compute_similarity(hr_x, self.local_window, dilation=2, sim='cos')
                lr_sim = compute_similarity(lr_x, self.local_window, dilation=2, sim='cos')
            elif self.direction_feat == 'sim_concat':
                hr_sim = torch.cat([hr_x, compute_similarity(hr_x, self.local_window, dilation=2, sim='cos')], dim=1)
                lr_sim = torch.cat([lr_x, compute_similarity(lr_x, self.local_window, dilation=2, sim='cos')], dim=1)
                hr_x, lr_x = hr_sim, lr_sim
            # offset = self.get_offset(hr_x, lr_x)
            offset = self.get_offset_lp(hr_x, lr_x, hr_sim, lr_sim)
            return self.sample(feat2sample, offset)

        # def get_offset_lp(self, hr_x, lr_x):
        def get_offset_lp(self, hr_x, lr_x, hr_sim, lr_sim):
            if hasattr(self, 'direct_scale'):
                # offset = (self.offset(lr_x) + F.pixel_unshuffle(self.hr_offset(hr_x), self.scale)) * (self.direct_scale(lr_x) + F.pixel_unshuffle(self.hr_direct_scale(hr_x), self.scale)).sigmoid() + self.init_pos
                offset = (self.offset(lr_sim) + F.pixel_unshuffle(self.hr_offset(hr_sim), self.scale)) * (self.direct_scale(lr_x) + F.pixel_unshuffle(self.hr_direct_scale(hr_x), self.scale)).sigmoid() + self.init_pos
                # offset = (self.offset(lr_sim) + F.pixel_unshuffle(self.hr_offset(hr_sim), self.scale)) * (self.direct_scale(lr_sim) + F.pixel_unshuffle(self.hr_direct_scale(hr_sim), self.scale)).sigmoid() + self.init_pos
            else:
                offset = (self.offset(lr_x) + F.pixel_unshuffle(self.hr_offset(hr_x), self.scale)) * 0.25 + self.init_pos
            return offset

        def get_offset(self, hr_x, lr_x):
            # Unused by forward(); kept for compatibility.
            if self.style == 'pl':
                raise NotImplementedError
            # get_offset_lp takes four arguments; the original two-argument call would raise
            # a TypeError. Reusing hr_x/lr_x as the similarity inputs matches 'sim_concat' mode.
            return self.get_offset_lp(hr_x, lr_x, hr_x, lr_x)

    def compute_similarity(input_tensor, k=3, dilation=1, sim='cos'):
        """
        Compute the cosine similarity between each point of the input tensor and the
        points in its surrounding KxK neighborhood.
        Args:
        - input_tensor: input tensor of shape [B, C, H, W]
        - k: neighborhood size (a KxK window around each point)
        Returns:
        - output tensor of shape [B, KxK-1, H, W]
        """
        B, C, H, W = input_tensor.shape
        # Zero padding handles the borders
        # padded_input = F.pad(input_tensor, (k // 2, k // 2, k // 2, k // 2), mode='constant', value=0)
        # Unfold each point together with its KxK neighborhood
        unfold_tensor = F.unfold(input_tensor, k, padding=(k // 2) * dilation, dilation=dilation)  # B, CxKxK, HW
        unfold_tensor = unfold_tensor.reshape(B, C, k**2, H, W)
        # Similarity between the center point and every point of the window
        if sim == 'cos':
            similarity = F.cosine_similarity(unfold_tensor[:, :, k * k // 2:k * k // 2 + 1], unfold_tensor[:, :, :], dim=1)
        elif sim == 'dot':
            similarity = unfold_tensor[:, :, k * k // 2:k * k // 2 + 1] * unfold_tensor[:, :, :]
            similarity = similarity.sum(dim=1)
        else:
            raise NotImplementedError
        # Drop the center point's self-similarity, leaving KxK-1 values
        similarity = torch.cat((similarity[:, :k * k // 2], similarity[:, k * k // 2 + 1:]), dim=1)
        # Reshape the result to [B, KxK-1, H, W]
        similarity = similarity.view(B, k * k - 1, H, W)
        return similarity
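
The `kernel_normalizer` method above turns raw filter logits into a valid smoothing kernel in three steps: softmax, multiplication by a Hamming window, and renormalization. The sketch below replays those steps for a single location in plain Python, without PyTorch. The helper names `hamming`, `hamming_2d`, and `normalize_kernel` are illustrative and do not exist in the core code; `hamming` uses the standard formula that `np.hamming` also implements.

```python
import math


def hamming(m):
    """1D Hamming window: 0.54 - 0.46 * cos(2*pi*n / (m - 1))."""
    if m == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2 * math.pi * n / (m - 1)) for n in range(m)]


def hamming_2d(rows, cols):
    """Outer product of two 1D Hamming windows."""
    wr, wc = hamming(rows), hamming(cols)
    return [[a * b for b in wc] for a in wr]


def normalize_kernel(logits, k):
    """Softmax over k*k logits, weight by the window, renormalize to sum to 1."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    window = hamming_2d(k, k)
    weighted = [[probs[i * k + j] * window[i][j] for j in range(k)] for i in range(k)]
    norm = sum(sum(row) for row in weighted)
    return [[v / norm for v in row] for row in weighted]


# Uniform logits for one spatial location of a 3x3 filter
kernel = normalize_kernel([0.0] * 9, k=3)
```

Even with uniform logits, the window pushes most of the weight toward the center tap, which is the regularizing effect the `hamming_window` flag refers to.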

4. Integration Steps

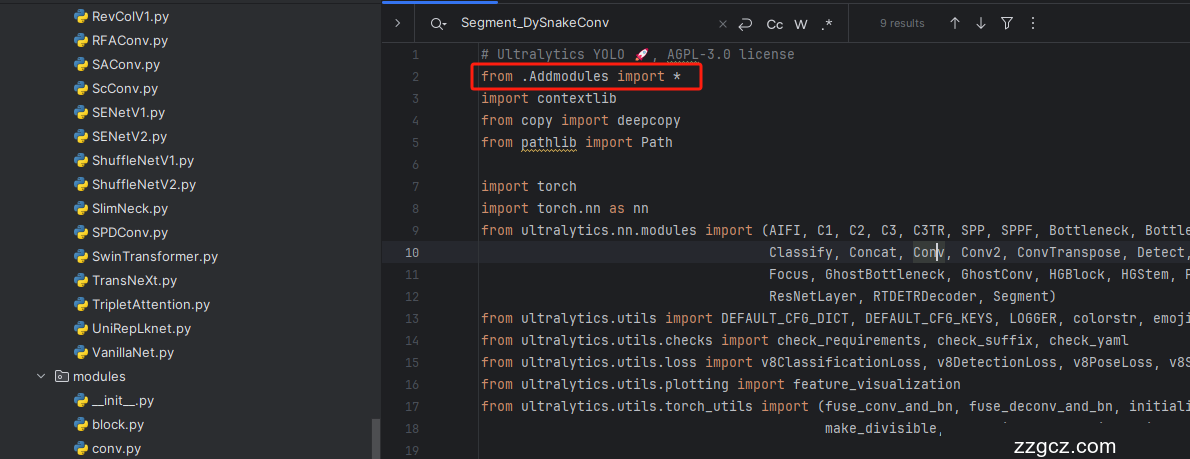

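4.1 Step 1

First, create the file. Under the ultralytics/nn folder, create a directory named 'Addmodules' (if you use the files shared in the group, it already exists and you do not need to create it). Then create a new .py file inside it and paste the core code into that file.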

4.2 Step 2

Second, create a new file named '__init__.py' in the same directory (it already exists if you use the group files), and import the new module inside it.
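
Steps 1 and 2 can be sketched as a small script. This is only an illustration: the module file name `FreqFusion.py` and the wildcard import line are assumptions, not something the post specifies, and the demo runs inside a temporary directory so it does not touch a real checkout.

```python
import tempfile
from pathlib import Path


def scaffold_addmodules(repo_root, module_file="FreqFusion.py"):
    """Create ultralytics/nn/Addmodules with an __init__.py that re-exports the module."""
    pkg = Path(repo_root) / "ultralytics" / "nn" / "Addmodules"
    pkg.mkdir(parents=True, exist_ok=True)

    module_path = pkg / module_file
    if not module_path.exists():
        # Placeholder only; the real file holds the core code from Section 3
        module_path.write_text("# paste the core code here\n", encoding="utf-8")

    init_path = pkg / "__init__.py"
    import_line = f"from .{Path(module_file).stem} import *\n"  # assumed import style
    existing = init_path.read_text(encoding="utf-8") if init_path.exists() else ""
    if import_line not in existing:
        init_path.write_text(existing + import_line, encoding="utf-8")
    return pkg


# Demo in a throwaway directory
with tempfile.TemporaryDirectory() as tmp:
    pkg = scaffold_addmodules(tmp)
    init_text = (pkg / "__init__.py").read_text(encoding="utf-8")
```

In a real project, `repo_root` would be the root of the Ultralytics checkout, and the core code defines `__all__ = ['FreqFusion']`, so a wildcard import exposes only that class.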

4.3 Step 3

Third, open 'ultralytics/nn/tasks.py' and import and register the module there (if you use the group files this is already done, so go straight to Step 4).

4.4 Step 4

Add the following branch inside parse_model:

    elif m in {FreqFusion}:
        c2 = ch[f[0]]
        args = [[ch[x] for x in f], *args]
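
To see what this branch hands to the module, the sketch below replays it outside of `parse_model`. The wrapper function `freqfusion_parse` and the channel list are illustrative assumptions (the list approximates the 'n' scale of the YAML in Section 5.1); only the two assignments come from the branch itself.

```python
def freqfusion_parse(ch, f, args):
    """Replay the FreqFusion branch: derive output channels and constructor args."""
    c2 = ch[f[0]]                       # output channels follow the first (high-res) input
    args = [[ch[x] for x in f], *args]  # prepend [hr_channels, lr_channels]
    return c2, args


# Assumed output channels of layers 0..13 for the 'n' scale
ch = [16, 32, 64, 64, 128, 128, 128, 256, 256, 256, 256, 64, 64, 64]

# Layer 14 in the YAML is [[12, -1], 1, FreqFusion, []]
c2, ctor_args = freqfusion_parse(ch, f=[12, -1], args=[])
```

The resulting `ctor_args` is `[[64, 64]]`, which `FreqFusion.__init__` unpacks as `hr_channels, lr_channels = channels`. Because `forward` ends with `hr_feat + lr_feat`, the two inputs need the same channel count.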

4.5 Step 5

Fifth, open 'ultralytics/nn/tasks.py' again, locate the line that computes m.stride (marked with a red box in the original screenshot), and replace it with the code below.

    try:
        m.stride = torch.tensor([s / x.shape[-2] for x in _forward(torch.zeros(1, ch, s, s))])  # forward on CPU
    except RuntimeError:
        try:
            self.model.to(torch.device('cuda'))
            m.stride = torch.tensor([s / x.shape[-2] for x in _forward(
                torch.zeros(1, ch, s, s).to(torch.device('cuda')))])  # forward on CUDA
        except RuntimeError as error:
            raise error
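
The replacement follows a try-CPU-then-CUDA pattern, presumably because the dummy forward pass can fail on CPU once this module is in the model. The sketch below shows the same pattern and the stride arithmetic in plain Python. `compute_strides` and `fake_forward` are hypothetical stand-ins, not Ultralytics functions.

```python
def compute_strides(forward, s, devices=("cpu", "cuda")):
    """Try each device in order; return input size / feature-map height per level."""
    last_error = None
    for device in devices:
        try:
            heights = forward(s, device)
            return [s / h for h in heights]
        except RuntimeError as error:
            last_error = error  # remember the failure and try the next device
    if last_error is None:
        raise ValueError("no devices given")
    raise last_error


def fake_forward(s, device):
    """Hypothetical model stand-in that fails on CPU, like a CUDA-only op would."""
    if device == "cpu":
        raise RuntimeError("op not implemented for CPU")
    return [s // 8, s // 16, s // 32]  # feature-map heights of P3, P4, P5


strides = compute_strides(fake_forward, s=256)
```

With a 256-pixel dummy input and feature maps of height 32, 16, and 8, the strides come out as 8, 16, and 32, matching the P3/8, P4/16, and P5/32 labels in the YAML.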

That completes the modifications. You can copy the YAML file below and run it.

5. Training

5.1 YAML file

Training info: YOLO11n-FreqFusion-BiFPN summary: 356 layers, 2,441,503 parameters, 2,441,487 gradients, 6.9 GFLOPs
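
For context, the summary above can be compared with the stock YOLO11n figures quoted in the YAML comments (2,624,080 parameters, 6.6 GFLOPs). The short calculation below uses only those two sets of numbers; the variable names are illustrative.

```python
baseline_params, baseline_gflops = 2_624_080, 6.6   # YOLO11n summary
modified_params, modified_gflops = 2_441_503, 6.9   # YOLO11n-FreqFusion-BiFPN summary

param_saving = baseline_params - modified_params
param_saving_pct = 100 * param_saving / baseline_params
gflops_change_pct = 100 * (modified_gflops - baseline_gflops) / baseline_gflops

print(f"{param_saving:,} fewer parameters ({param_saving_pct:.1f}%)")
print(f"GFLOPs change: {gflops_change_pct:+.1f}%")
```

So the model is lighter in parameters (roughly 7% fewer) while costing slightly more compute (about 4.5% more GFLOPs).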

Note: this mechanism requires AMP to be disabled during training; otherwise training raises an error.

    # Ultralytics YOLO 🚀, AGPL-3.0 license
    # YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

    # Parameters
    nc: 80 # number of classes
    scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
      # [depth, width, max_channels]
      n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
      s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
      m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
      l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
      x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

    # YOLO11n backbone
    backbone:
      # [from, repeats, module, args]
      - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
      - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
      - [-1, 2, C3k2, [256, False, 0.25]]
      - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
      - [-1, 2, C3k2, [512, False, 0.25]]
      - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
      - [-1, 2, C3k2, [512, True]]
      - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
      - [-1, 2, C3k2, [1024, True]]
      - [-1, 1, SPPF, [1024, 5]] # 9
      - [-1, 2, C2PSA, [1024]] # 10

    # YOLO11n head
    head:
      - [4, 1, Conv, [256]] # 11-P3/8
      - [6, 1, Conv, [256]] # 12-P4/16
      - [10, 1, Conv, [256]] # 13-P5/32

      - [[12, -1], 1, FreqFusion, []] # 14
      - [-1, 2, C3k2, [256, False]] # 15-P4/16

      - [[11, -1], 1, FreqFusion, []] # 16
      - [-1, 2, C3k2, [256, False]] # 17-P3/8

      - [1, 1, Conv, [256, 3, 2]] # 18 P2->P3
      - [[-1, 11, 17], 1, Bi_FPN, []] # 19
      - [-1, 2, C3k2, [256, False]] # 20-P3/8

      - [-1, 1, Conv, [256, 3, 2]] # 21 P3->P4
      - [[-1, 12, 15], 1, Bi_FPN, []] # 22
      - [-1, 2, C3k2, [512, False]] # 23-P4/16

      - [-1, 1, Conv, [256, 3, 2]] # 24 P4->P5
      - [[-1, 13], 1, Bi_FPN, []] # 25
      - [-1, 2, C3k2, [1024, True]] # 26-P5/32

      - [[20, 23, 26], 1, Detect, [nc]] # Detect(P3, P4, P5)
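
Every layer feeding a FreqFusion or Bi_FPN node in this head is declared with 256 channels, so the real channel count depends only on the chosen scale. The sketch below estimates it, assuming Ultralytics-style scaling (multiply by the width factor after capping at max_channels, then round up to a multiple of 8). The helper names are illustrative and the rounding rule is an assumption about the framework, not something stated in this post.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scaled_channels(c2, width, max_channels):
    """Channel count after applying a scale's width multiplier (assumed rule)."""
    return make_divisible(min(c2, max_channels) * width, 8)


# [depth, width, max_channels] per scale, copied from the YAML above
scales = {"n": (0.50, 0.25, 1024), "s": (0.50, 0.50, 1024), "m": (0.50, 1.00, 512),
          "l": (1.00, 1.00, 512), "x": (1.00, 1.50, 512)}

head_channels = {name: scaled_channels(256, width, max_ch)
                 for name, (_, width, max_ch) in scales.items()}
```

Under these assumptions the 256-channel head layers become 64 channels at scale 'n', 128 at 's', 256 at 'm' and 'l', and 384 at 'x'. Both inputs of each FreqFusion node scale together, so the final `hr_feat + lr_feat` addition stays shape-compatible at every scale.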

5.2 Training code

Create a .py file, paste in the code below, and set your own file paths; it is then ready to run.

    import warnings
    warnings.filterwarnings('ignore')
    from ultralytics import YOLO

    if __name__ == '__main__':
        # Point this at the YAML from Section 5.1; the file name below is a placeholder.
        model = YOLO('yolo11-FreqFusion-BiFPN.yaml')
        # To switch model scale, change the name passed to YOLO() above: for a config saved as
        # yolo11-XXX.yaml, pass 'yolo11s-XXX.yaml' or 'yolo11l-XXX.yaml' to get the s or l scale
        # (only the name passed to YOLO() changes, not the config file itself).
        # model.load('yolo11n.pt')  # optional pretrained weights; not recommended for research, since it makes accuracy gains hard to show
        model.train(data=r"C:\Users\Administrator\PycharmProjects\yolov5-master\yolov5-master\Construction Site Safety.v30-raw-images_latestversion.yolov8\data.yaml",
                    # For other tasks, set task in 'ultralytics/cfg/default.yaml' to detect, segment, classify, or pose
                    cache=False,
                    imgsz=640,
                    epochs=150,
                    single_cls=False,  # whether this is single-class detection
                    batch=16,
                    close_mosaic=0,
                    workers=0,
                    device='0',
                    optimizer='SGD',  # using SGD
                    # resume='runs/train/exp21/weights/last.pt',  # set to the last.pt path to resume training
                    amp=False,  # AMP must stay off for this model; turning it off also helps if the loss becomes NaN
                    project='runs/train',
                    name='exp',
                    )

5.3 Training screenshots

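6. Summary

That concludes this post. If it helped you, consider subscribing to my column on effective YOLOv11 improvements. The column is newly opened, with an average quality score of 98. I will keep reproducing papers from the latest top conferences and will also extend some of the older improvement mechanisms, so follow along for further updates.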