1. Introduction

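This post improves our YOLOv11 model by using MLLABlock, from the Mamba-inspired MLLA (Mamba-Like Linear Attention) work, to rework the C2f-style C3k2 module. MLLA brings some of Mamba's core designs into linear attention to improve model performance. Specifically, it draws on two key factors in Mamba: the forget gate and the block design. MLLA replaces the forget gate with positional encoding (RoPE), which supplies the necessary positional information while keeping parallel computation and fast inference. This makes MLLA more effective on non-autoregressive vision tasks. The material in this post is my own compilation and is published here first.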

2. How It Works

Official paper: Demystify Mamba in Vision: A Linear Attention Perspective

Official code: the authors' official repository for the paper

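In the paper, MLLA (Mamba-Like Linear Attention) works by bringing some of Mamba's core designs into linear attention to improve model performance. Specifically, MLLA incorporates two key factors from Mamba, the forget gate and the block design, which the paper identifies as the main reasons for Mamba's success.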

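The principles behind MLLA in more detail:

- Forget gate:
  - The forget gate provides local bias and positional information. Every element of the forget gate lies strictly between 0 and 1, so the model keeps decaying the previous hidden state each time it receives a new input. This makes the model sensitive to the order of the input sequence.
  - Local bias and positional information matter a great deal for image tasks. The cost is that a forget gate forces the computation into a recurrent form, which reduces parallel efficiency.

- Block design:
  - With FLOPs kept roughly the same, using linear attention as the attention sub-module inside Mamba's block design improves performance. The results show that adopting this block design raises model accuracy markedly.

- Improved linear attention:
  - Linear attention is redesigned to incorporate both the forget gate and the block design; the resulting model is called MLLA. Experiments show that MLLA outperforms a range of vision Mamba models on image classification and on high-resolution dense prediction tasks.

- Parallel computation and fast inference:
  - MLLA replaces the forget gate with positional encoding (RoPE), which supplies the necessary positional information while keeping parallel computation and fast inference. This makes MLLA more effective on non-autoregressive vision tasks.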
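The property that lets RoPE stand in for the forget gate is that attention scores between rotated vectors depend only on the relative offset between positions. The snippet below is a minimal plain-Python sketch of that property, not the `RoPE` module used later in this post: the helper names `rope_1d` and `dot` and the toy vectors are assumptions made for illustration, and it covers a 1D sequence only.

```python
import cmath

def rope_1d(vec, pos, base=10000.0):
    """Rotate consecutive feature pairs of `vec` by angles that grow with `pos`."""
    half = len(vec) // 2
    out = []
    for k in range(half):
        theta = 1.0 / (base ** (k / half))        # frequency of the k-th pair
        z = complex(vec[2 * k], vec[2 * k + 1])   # treat the pair as one complex number
        z *= cmath.exp(1j * pos * theta)          # rotate it by pos * theta
        out.extend([z.real, z.imag])
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

# Toy query/key vectors (hypothetical values).
q = [0.3, -1.2, 0.8, 0.5]
k = [1.1, 0.4, -0.7, 0.9]

# Same offset (3 positions apart) at two very different absolute positions.
score_a = dot(rope_1d(q, 5), rope_1d(k, 2))
score_b = dot(rope_1d(q, 105), rope_1d(k, 102))
print(abs(score_a - score_b) < 1e-9)
```

Because the score depends only on the offset, every token pair can be processed in parallel; no running state has to be decayed step by step.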

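With these changes, MLLA keeps the strengths of the Mamba model while removing some of its limitations in parallel computation, which makes it a better fit for vision tasks. MLLA shows that, with the right design, linear attention can also outperform strong existing models.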
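The trade-off between the recurrent forget-gate form and the parallel form can be sketched in a few lines. This is a plain-Python illustration under assumptions, not code from the paper or from this post: the function names and toy values are hypothetical, and the queries and keys are simply chosen positive, as they would be after an `ELU + 1` feature map.

```python
def gated_linear_attention(q, k, v, forget):
    """Recurrent form: state_i = f_i * state_{i-1} + k_i^T v_i, read out with q_i."""
    d, dv = len(k[0]), len(v[0])
    state = [[0.0] * dv for _ in range(d)]   # running (decayed) sum of k^T v
    norm = [0.0] * d                         # running (decayed) sum of k
    outputs = []
    for q_i, k_i, v_i, f_i in zip(q, k, v, forget):
        state = [[f_i * state[r][c] + k_i[r] * v_i[c] for c in range(dv)] for r in range(d)]
        norm = [f_i * norm[r] + k_i[r] for r in range(d)]
        denom = sum(a * b for a, b in zip(q_i, norm))
        outputs.append([sum(q_i[r] * state[r][c] for r in range(d)) / denom for c in range(dv)])
    return outputs

def global_linear_attention(q, k, v):
    """Parallel form: one shared K^T V summary, with no ordering between tokens."""
    d, dv = len(k[0]), len(v[0])
    state = [[sum(k_j[r] * v_j[c] for k_j, v_j in zip(k, v)) for c in range(dv)] for r in range(d)]
    norm = [sum(k_j[r] for k_j in k) for r in range(d)]
    return [[sum(q_i[r] * state[r][c] for r in range(d)) / sum(a * b for a, b in zip(q_i, norm))
             for c in range(dv)] for q_i in q]

# Three toy tokens with 2-dimensional features (hypothetical values).
q = [[1.0, 2.0], [0.5, 1.5], [2.0, 0.3]]
k = [[0.7, 1.2], [1.1, 0.4], [0.9, 0.8]]
v = [[1.0, 0.0], [0.0, 1.0], [2.0, -1.0]]

no_gate = gated_linear_attention(q, k, v, [1.0, 1.0, 1.0])[-1]
gated = gated_linear_attention(q, k, v, [0.5, 0.5, 0.5])[-1]
swapped = gated_linear_attention(q, [k[1], k[0], k[2]], [v[1], v[0], v[2]], [0.5, 0.5, 0.5])[-1]
print(no_gate, global_linear_attention(q, k, v)[-1])  # identical: no gate means no ordering
print(gated, swapped)                                 # differ: the gate makes order matter
```

With all gates equal to 1 the last token's output matches the parallel form, so the loop can be dropped. With gates below 1 the result depends on token order, which is the positional signal MLLA recovers through RoPE instead.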

3. Core Code

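The code includes the RoPE module mentioned above, but I redesigned it: the original code needs the image width and height when the module is defined, whereas this version computes them at run time from the input. If you are interested, compare it with the open-source code.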
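The difference between fixing the resolution at construction time and deriving it per call can be sketched as follows. This is a plain-Python illustration under assumptions: the function name `rope_angles_2d` is hypothetical, and it follows the general 2D scheme of giving half of the frequency bands to the row index and half to the column index, rather than reproducing the module below line by line.

```python
def rope_angles_2d(h, w, channels, base=10000.0):
    """Return an h x w grid where each cell holds channels // 2 rotation angles."""
    k_max = channels // 4                     # frequency bands per spatial axis
    if k_max == 0 or channels % 4 != 0:
        raise ValueError("channels must be a positive multiple of 4")
    thetas = [1.0 / (base ** (k / k_max)) for k in range(k_max)]
    return [[[row * t for t in thetas] + [col * t for t in thetas]
             for col in range(w)] for row in range(h)]

# The table is derived from whatever shape arrives, so the same function
# serves feature maps of different sizes without storing a resolution.
for h, w in [(80, 80), (40, 40), (20, 20)]:
    grid = rope_angles_2d(h, w, channels=16)
    print((h, w), len(grid), len(grid[0]), len(grid[0][0]))
```

Computing the table on demand costs a little extra work per forward pass, but it removes the need to know the feature-map size when the layer is built, which is convenient in a detector whose layers run at several strides.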

- # --------------------------------------------------------

- # Swin Transformer

- # Copyright (c) 2021 Microsoft

- # Licensed under The MIT License [see LICENSE for details]

- # Written by Ze Liu

- # --------------------------------------------------------

- # Demystify Mamba in Vision: A Linear Attention Perspective

- # Modified by Dongchen Han

- # -----------------------------------------------------------------------

- import torch

- import torch.nn as nn

- __all__ = ['C3k2_MLLABlock1', 'C3k2_MLLABlock2']

- def drop_path(x, drop_prob: float = 0., training: bool = False, scale_by_keep: bool = True):

- """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

- This is the same as the DropConnect impl I created for EfficientNet, etc networks, however,

- the original name is misleading as 'Drop Connect' is a different form of dropout in a separate paper...

- See discussion: https://github.com/tensorflow/tpu/issues/494#issuecomment-532968956 ... I've opted for

- changing the layer and argument names to 'drop path' rather than mix DropConnect as a layer name and use

- 'survival rate' as the argument.

- """

- if drop_prob == 0. or not training:

- return x

- keep_prob = 1 - drop_prob

- shape = (x.shape[0],) + (1,) * (x.ndim - 1) # work with diff dim tensors, not just 2D ConvNets

- random_tensor = x.new_empty(shape).bernoulli_(keep_prob)

- if keep_prob > 0.0 and scale_by_keep:

- random_tensor.div_(keep_prob)

- return x * random_tensor

- class DropPath(nn.Module):

- """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

- """

- def __init__(self, drop_prob: float = 0., scale_by_keep: bool = True):

- super(DropPath, self).__init__()

- self.drop_prob = drop_prob

- self.scale_by_keep = scale_by_keep

- def forward(self, x):

- return drop_path(x, self.drop_prob, self.training, self.scale_by_keep)

- def extra_repr(self):

- return f'drop_prob={round(self.drop_prob,3):0.3f}'

- class Mlp(nn.Module):

- def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):

- super().__init__()

- out_features = out_features or in_features

- hidden_features = hidden_features or in_features

- self.fc1 = nn.Linear(in_features, hidden_features)

- self.act = act_layer()

- self.fc2 = nn.Linear(hidden_features, out_features)

- self.drop = nn.Dropout(drop)

- def forward(self, x):

- x = self.fc1(x)

- x = self.act(x)

- x = self.drop(x)

- x = self.fc2(x)

- x = self.drop(x)

- return x

- class ConvLayer(nn.Module):

- def __init__(self, in_channels, out_channels, kernel_size=3, stride=1, padding=0, dilation=1, groups=1,

- bias=True, dropout=0, norm=nn.BatchNorm2d, act_func=nn.ReLU):

- super(ConvLayer, self).__init__()

- self.dropout = nn.Dropout2d(dropout, inplace=False) if dropout > 0 else None

- self.conv = nn.Conv2d(

- in_channels,

- out_channels,

- kernel_size=(kernel_size, kernel_size),

- stride=(stride, stride),

- padding=(padding, padding),

- dilation=(dilation, dilation),

- groups=groups,

- bias=bias,

- )

- self.norm = norm(num_features=out_channels) if norm else None

- self.act = act_func() if act_func else None

- def forward(self, x: torch.Tensor) -> torch.Tensor:

- if self.dropout is not None:

- x = self.dropout(x)

- x = self.conv(x)

- if self.norm:

- x = self.norm(x)

- if self.act:

- x = self.act(x)

- return x

- class RoPE(torch.nn.Module):

- r"""Rotary Positional Embedding.

- """

- def __init__(self, base=10000):

- super(RoPE, self).__init__()

- self.base = base

- def generate_rotations(self, x):

- # Read the spatial and feature dimensions from the input tensor's shape

- *channel_dims, feature_dim = x.shape[1:-1][0], x.shape[-1]

- k_max = feature_dim // (2 * len(channel_dims))

- assert feature_dim % k_max == 0, "Feature dimension must be divisible by 2 * k_max"

- # Build the rotation angles

- theta_ks = 1 / (self.base ** (torch.arange(k_max, dtype=x.dtype, device=x.device) / k_max))

- angles = torch.cat([t.unsqueeze(-1) * theta_ks for t in

- torch.meshgrid([torch.arange(d, dtype=x.dtype, device=x.device) for d in channel_dims],

- indexing='ij')], dim=-1)

- # Compute the real and imaginary parts of the rotations

- rotations_re = torch.cos(angles).unsqueeze(dim=-1)

- rotations_im = torch.sin(angles).unsqueeze(dim=-1)

- rotations = torch.cat([rotations_re, rotations_im], dim=-1)

- return rotations

- def forward(self, x):

- # Generate the rotations for this input

- rotations = self.generate_rotations(x)

- # View x as complex numbers (consecutive feature pairs)

- x_complex = torch.view_as_complex(x.reshape(*x.shape[:-1], -1, 2))

- # Apply the rotations

- pe_x = torch.view_as_complex(rotations) * x_complex

- # Convert back to real values and flatten the last two dimensions

- return torch.view_as_real(pe_x).flatten(-2)

- class LinearAttention(nn.Module):

- r""" Linear Attention with LePE and RoPE.

- Args:

- dim (int): Number of input channels.

- num_heads (int): Number of attention heads.

- qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True

- """

- def __init__(self, dim, num_heads=4, qkv_bias=True, **kwargs):

- super().__init__()

- self.dim = dim

- self.num_heads = num_heads

- self.qk = nn.Linear(dim, dim * 2, bias=qkv_bias)

- self.elu = nn.ELU()

- self.lepe = nn.Conv2d(dim, dim, 3, padding=1, groups=dim)

- self.rope = RoPE()

- def forward(self, x):

- """

- Args:

- x: input features with shape of (B, N, C)

- """

- b, n, c = x.shape

- h = int(n ** 0.5)

- w = int(n ** 0.5)

- num_heads = self.num_heads

- head_dim = c // num_heads

- qk = self.qk(x).reshape(b, n, 2, c).permute(2, 0, 1, 3)

- q, k, v = qk[0], qk[1], x

- # q, k, v: b, n, c

- q = self.elu(q) + 1.0

- k = self.elu(k) + 1.0

- q_rope = self.rope(q.reshape(b, h, w, c)).reshape(b, n, num_heads, head_dim).permute(0, 2, 1, 3)

- k_rope = self.rope(k.reshape(b, h, w, c)).reshape(b, n, num_heads, head_dim).permute(0, 2, 1, 3)

- q = q.reshape(b, n, num_heads, head_dim).permute(0, 2, 1, 3)

- k = k.reshape(b, n, num_heads, head_dim).permute(0, 2, 1, 3)

- v = v.reshape(b, n, num_heads, head_dim).permute(0, 2, 1, 3)

- z = 1 / (q @ k.mean(dim=-2, keepdim=True).transpose(-2, -1) + 1e-6)

- kv = (k_rope.transpose(-2, -1) * (n ** -0.5)) @ (v * (n ** -0.5))

- x = q_rope @ kv * z

- x = x.transpose(1, 2).reshape(b, n, c)

- v = v.transpose(1, 2).reshape(b, h, w, c).permute(0, 3, 1, 2)

- x = x + self.lepe(v).permute(0, 2, 3, 1).reshape(b, n, c)

- return x

- def extra_repr(self) -> str:

- return f'dim={self.dim}, num_heads={self.num_heads}'

- class MLLABlock(nn.Module):

- r""" MLLA Block.

- Args:

- dim (int): Number of input channels.

- num_heads (int): Number of attention heads.

- mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.

- qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True

- drop (float, optional): Dropout rate. Default: 0.0

- drop_path (float, optional): Stochastic depth rate. Default: 0.0

- act_layer (nn.Module, optional): Activation layer. Default: nn.GELU

- norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm

- """

- def __init__(self, dim, num_heads=4, mlp_ratio=4., qkv_bias=True, drop=0., drop_path=0.,

- act_layer=nn.GELU, norm_layer=nn.LayerNorm, **kwargs):

- super().__init__()

- self.dim = dim

- num_heads = max(1, dim // 64)

- self.num_heads = num_heads

- self.mlp_ratio = mlp_ratio

- self.cpe1 = nn.Conv2d(dim, dim, 3, padding=1, groups=dim)

- self.norm1 = norm_layer(dim)

- self.in_proj = nn.Linear(dim, dim)

- self.act_proj = nn.Linear(dim, dim)

- self.dwc = nn.Conv2d(dim, dim, 3, padding=1, groups=dim)

- self.act = nn.SiLU()

- self.attn = LinearAttention(dim=dim, num_heads=num_heads, qkv_bias=qkv_bias)

- self.out_proj = nn.Linear(dim, dim)

- self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()

- self.cpe2 = nn.Conv2d(dim, dim, 3, padding=1, groups=dim)

- self.norm2 = norm_layer(dim)

- self.mlp = Mlp(in_features=dim, hidden_features=int(dim * mlp_ratio), act_layer=act_layer, drop=drop)

- def forward(self, x):

- x = x.flatten(2).transpose(1, 2) # (B, C, H, W) -> (B, H*W, C); a bare reshape would mix channels and pixels

- b, n, c = x.shape

- H = int(n ** 0.5)

- W = int(n ** 0.5)

- B, L, C = x.shape

- assert L == H * W, "input feature has wrong size"

- x = x + self.cpe1(x.reshape(B, H, W, C).permute(0, 3, 1, 2)).flatten(2).permute(0, 2, 1)

- shortcut = x

- x = self.norm1(x)

- act_res = self.act(self.act_proj(x))

- x = self.in_proj(x).view(B, H, W, C)

- x = self.act(self.dwc(x.permute(0, 3, 1, 2))).permute(0, 2, 3, 1).view(B, L, C)

- # Linear Attention

- x = self.attn(x)

- x = self.out_proj(x * act_res)

- x = shortcut + self.drop_path(x)

- x = x + self.cpe2(x.reshape(B, H, W, C).permute(0, 3, 1, 2)).flatten(2).permute(0, 2, 1)

- # FFN

- x = x + self.drop_path(self.mlp(self.norm2(x)))

- x = x.transpose(2, 1).reshape((b, c, H, W))

- return x

- def extra_repr(self) -> str:

- return f"dim={self.dim}, num_heads={self.num_heads}, mlp_ratio={self.mlp_ratio}"

- def autopad(k, p=None, d=1): # kernel, padding, dilation

- """Pad to 'same' shape outputs."""

- if d > 1:

- k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k] # actual kernel-size

- if p is None:

- p = k // 2 if isinstance(k, int) else [x // 2 for x in k] # auto-pad

- return p

- class Conv(nn.Module):

- """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""

- default_act = nn.SiLU() # default activation

- def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):

- """Initialize Conv layer with given arguments including activation."""

- super().__init__()

- self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)

- self.bn = nn.BatchNorm2d(c2)

- self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

- def forward(self, x):

- """Apply convolution, batch normalization and activation to input tensor."""

- return self.act(self.bn(self.conv(x)))

- def forward_fuse(self, x):

- """Apply convolution and activation without batch normalization (used after the BN is fused into the conv)."""

- return self.act(self.conv(x))

- class Bottleneck(nn.Module):

- """Standard bottleneck."""

- def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):

- """Initializes a standard bottleneck module with optional shortcut connection and configurable parameters."""

- super().__init__()

- c_ = int(c2 * e) # hidden channels

- self.cv1 = Conv(c1, c_, k[0], 1)

- self.cv2 = Conv(c_, c2, k[1], 1, g=g)

- self.add = shortcut and c1 == c2

- def forward(self, x):

- """Apply the two convolutions and add the input back when the shortcut is enabled."""

- return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

- class C2f(nn.Module):

- """Faster Implementation of CSP Bottleneck with 2 convolutions."""

- def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):

- """Initializes a CSP bottleneck with 2 convolutions and n Bottleneck blocks for faster processing."""

- super().__init__()

- self.c = int(c2 * e) # hidden channels

- self.cv1 = Conv(c1, 2 * self.c, 1, 1)

- self.cv2 = Conv((2 + n) * self.c, c2, 1) # optional act=FReLU(c2)

- self.m = nn.ModuleList(Bottleneck(self.c, self.c, shortcut, g, k=((3, 3), (3, 3)), e=1.0) for _ in range(n))

- def forward(self, x):

- """Forward pass through C2f layer."""

- y = list(self.cv1(x).chunk(2, 1))

- y.extend(m(y[-1]) for m in self.m)

- return self.cv2(torch.cat(y, 1))

- def forward_split(self, x):

- """Forward pass using split() instead of chunk()."""

- y = list(self.cv1(x).split((self.c, self.c), 1))

- y.extend(m(y[-1]) for m in self.m)

- return self.cv2(torch.cat(y, 1))

- class C3(nn.Module):

- """CSP Bottleneck with 3 convolutions."""

- def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):

- """Initialize the CSP Bottleneck with given channels, number, shortcut, groups, and expansion values."""

- super().__init__()

- c_ = int(c2 * e) # hidden channels

- self.cv1 = Conv(c1, c_, 1, 1)

- self.cv2 = Conv(c1, c_, 1, 1)

- self.cv3 = Conv(2 * c_, c2, 1) # optional act=FReLU(c2)

- self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, k=((1, 1), (3, 3)), e=1.0) for _ in range(n)))

- def forward(self, x):

- """Forward pass through the CSP bottleneck with 3 convolutions."""

- return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))

- class C3k(C3):

- """C3k is a CSP bottleneck module with customizable kernel sizes for feature extraction in neural networks."""

- def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5, k=3):

- """Initializes the C3k module with specified channels, number of layers, and configurations."""

- super().__init__(c1, c2, n, shortcut, g, e)

- c_ = int(c2 * e) # hidden channels

- # self.m = nn.Sequential(*(RepBottleneck(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))

- self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))

- class C3kMLLABlock(C3):

- """C3k-style CSP module whose inner Bottleneck blocks are replaced by MLLABlock."""

- def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5, k=3):

- """Initializes the C3k module with specified channels, number of layers, and configurations."""

- super().__init__(c1, c2, n, shortcut, g, e)

- c_ = int(c2 * e) # hidden channels

- # self.m = nn.Sequential(*(RepBottleneck(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))

- self.m = nn.Sequential(*(MLLABlock(c_) for _ in range(n)))

- class C3k2_MLLABlock1(C2f):

- """C3k2 variant that uses MLLABlock in place of the plain Bottleneck branches (C3k is kept when c3k=True)."""

- def __init__(self, c1, c2, n=1, c3k=False, e=0.5, g=1, shortcut=True):

- """Initializes the C3k2 module, a faster CSP Bottleneck with 2 convolutions and optional C3k blocks."""

- super().__init__(c1, c2, n, shortcut, g, e)

- self.m = nn.ModuleList(

- C3k(self.c, self.c, 2, shortcut, g) if c3k else MLLABlock(self.c,) for _ in range(n)

- )

- # Variant 1 above: MLLABlock replaces the Bottleneck

- class C3k2_MLLABlock2(C2f):

- """C3k2 variant that uses C3kMLLABlock when c3k=True and the plain Bottleneck otherwise."""

- def __init__(self, c1, c2, n=1, c3k=False, e=0.5, g=1, shortcut=True):

- """Initializes the C3k2 module, a faster CSP Bottleneck with 2 convolutions and optional C3k blocks."""

- super().__init__(c1, c2, n, shortcut, g, e)

- self.m = nn.ModuleList(

- C3kMLLABlock(self.c, self.c, 2, shortcut, g) if c3k else Bottleneck(self.c, self.c, shortcut, g) for _ in range(n)

- )

- # Variant 2 above: MLLABlock replaces the Bottleneck inside C3k

- # The two variants differ in which positions of the YAML file they are used at.

- if __name__ == "__main__":

- # Generating Sample image

- image_size = (1, 64, 224, 224)

- image = torch.rand(*image_size)

- # Model

- model = C3k2_MLLABlock2(64, 64, 3, c3k=True)

- out = model(image)

- print(out.size())
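How the C2f-style wrapper sizes its layers follows directly from the constructors above. The short sketch below recomputes those channel counts without PyTorch; the helper names `c2f_channels` and `mlla_heads` are hypothetical and exist only for this illustration.

```python
def c2f_channels(c1, c2, n=1, e=0.5):
    """Channel counts used by the C2f-style constructor: cv1, each branch, cv2."""
    c = int(c2 * e)                   # hidden channels per branch
    return {
        "hidden": c,
        "cv1": (c1, 2 * c),           # 1x1 conv whose output is split into two halves
        "branch": (c, c),             # each of the n branch modules keeps c channels
        "cv2": ((2 + n) * c, c2),     # concat of the 2 halves plus n branch outputs
    }

def mlla_heads(dim):
    """Head count MLLABlock derives from its width, whatever num_heads was passed in."""
    return max(1, dim // 64)

# Same configuration as the __main__ test above: 64 -> 64 channels, n=3.
info = c2f_channels(64, 64, n=3)
print(info, mlla_heads(info["hidden"]))
```

One consequence worth noting: at the narrow widths of the `n` scale, `dim // 64` is 0 for most branches, so the block falls back to a single attention head.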

4. Adding C3k2MLLABlock Step by Step

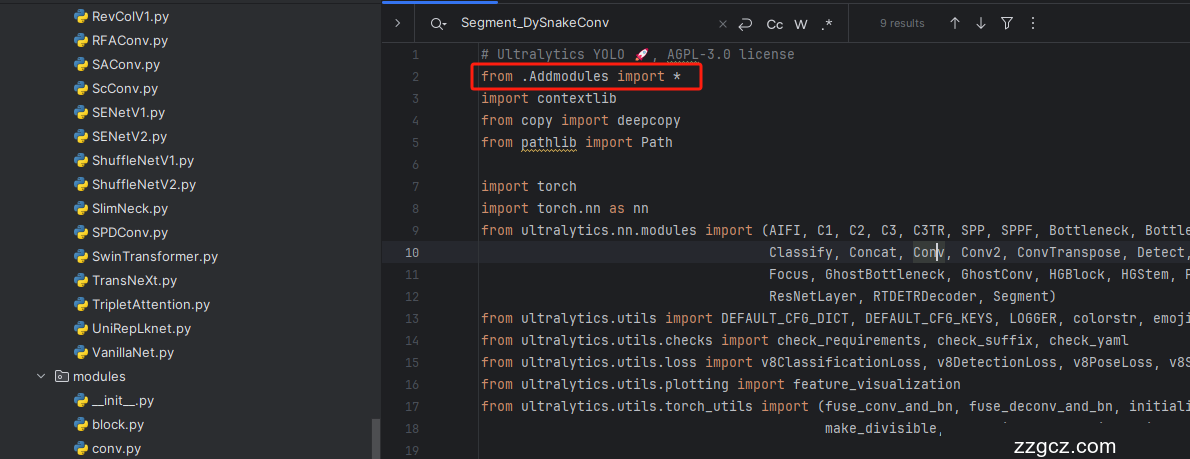

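4.1 Change 1

Step one is, as usual, to create the file. Under the ultralytics/nn folder, create a directory named 'Addmodules' (if you are using the files from my group, it already exists and does not need to be created). Then create a new .py file inside it and paste the core code into it.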

4.2 Change 2

Step two: in that directory, create a new file named '__init__.py' (already present if you use the group files), and import our module inside it.
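The first two steps amount to building a small Python package. The script below is a self-contained sketch of that layout in a temporary directory, so it does not touch a real Ultralytics checkout. The module file name `MLLA.py` and the stub classes are assumptions made for the demonstration; in the real project the file holds the core code from section 3.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Stand-in for the real module file; the actual file contains the torch code.
MODULE_SOURCE = '''
__all__ = ["C3k2_MLLABlock1", "C3k2_MLLABlock2"]

class C3k2_MLLABlock1:
    pass

class C3k2_MLLABlock2:
    pass
'''

def build_demo_package(root):
    """Create Addmodules/MLLA.py and Addmodules/__init__.py under `root`."""
    pkg = Path(root) / "Addmodules"
    pkg.mkdir(parents=True, exist_ok=True)
    (pkg / "MLLA.py").write_text(MODULE_SOURCE, encoding="utf-8")
    # The package __init__ re-exports everything listed in the module's __all__.
    (pkg / "__init__.py").write_text("from .MLLA import *\n", encoding="utf-8")
    return pkg

with tempfile.TemporaryDirectory() as tmp:
    build_demo_package(tmp)
    sys.path.insert(0, tmp)
    importlib.invalidate_caches()
    try:
        addmodules = importlib.import_module("Addmodules")
        exported = sorted(name for name in dir(addmodules) if name.startswith("C3k2"))
    finally:
        sys.path.remove(tmp)
        sys.modules.pop("Addmodules", None)
        sys.modules.pop("Addmodules.MLLA", None)

print(exported)
```

Because `__init__.py` uses a star import, only the names listed in the module's `__all__` become visible on the package, which is why the core code declares `__all__` at the top.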

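4.3 Change 3

Step three: open 'ultralytics/nn/tasks.py' and import and register our module there (if you use the group files, this is already done and you can go straight to step four).

From now on, all tutorials will follow this format, because I assume you are making the changes on top of the files from my group.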

4.4 Change 4

Add the module inside parse_model, following my additions.
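What registration in `parse_model` buys a C2f-style module is that a YAML row is expanded into the constructor arguments `(c1, c2, n, ...)`. The sketch below imitates that expansion in plain Python. It is a simplified approximation written for this post, not the Ultralytics source: `resolve_layer_args` is a hypothetical name, and the real function handles many more cases.

```python
import math

def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor

def resolve_layer_args(c1, repeats, yaml_args, depth, width, max_channels):
    """Expand a YAML row's [c2, *rest] into constructor args [c1, c2, n, *rest]."""
    # Depth scaling only applies to rows that repeat more than once.
    n = max(round(repeats * depth), 1) if repeats > 1 else repeats
    # Width scaling caps the channel count, scales it, and rounds up to a multiple of 8.
    c2 = make_divisible(min(yaml_args[0], max_channels) * width, 8)
    return [c1, c2, n, *yaml_args[1:]]

# Row "[-1, 2, C3k2_MLLABlock2, [256, False, 0.25]]" at scale n = [0.50, 0.25, 1024],
# fed by a layer with 32 output channels.
args = resolve_layer_args(32, 2, [256, False, 0.25], depth=0.50, width=0.25, max_channels=1024)
print(args)
```

The resulting list lines up with the constructor signature `(c1, c2, n, c3k, e, ...)` used by both C3k2 variants, which is why the new class names need to sit in the same branch of `parse_model` as the other C2f-style modules.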

4.5 Change 5

In ultralytics/models/yolo/detect/train.py, find the build_dataset function of the DetectionTrainer class and change rect=mode == 'val' to rect=False.
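The post does not state why this change is needed. One plausible reason, judging from the code in section 3, is that `MLLABlock` recovers the height and width as `int(n ** 0.5)` and therefore expects square feature maps, which rectangular validation batches do not provide. The sketch below illustrates that assumption in plain Python; the helper names are hypothetical.

```python
def recovered_side(h, w):
    """Mimic `int(n ** 0.5)`: return the side length, or None if H*W is not a perfect square."""
    n = h * w
    side = int(n ** 0.5)
    return side if side * side == n else None

def check_feature_map(h, w):
    """Describe what the square-grid assumption does to an h x w feature map."""
    side = recovered_side(h, w)
    if side is None:
        return "assertion fails"
    if side != h or side != w:
        return "passes the assertion but tokens are laid out on the wrong grid"
    return "ok"

for shape in [(20, 20), (20, 15), (16, 4)]:
    print(shape, check_feature_map(*shape))
```

The third case is the subtle one: a 16 x 4 map has 64 tokens, so the size check passes, yet the block would treat it as an 8 x 8 grid. Keeping `rect=False` sidesteps both failure modes by making every batch square.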

That completes the changes. You can copy the YAML files below and run them.

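5. YAML Files and Run Records for C3k2MLLABlock

5.1 C3k2MLLABlock YAML file 1

Model summary for this version: YOLO11-C3k2-MLLABlock-1 summary: 390 layers, 2,647,307 parameters, 2,647,291 gradients, 6.8 GFLOPs. Note that the YAML listed below follows the YOLOv10 layout and refers to `C2fMLLABlock`, a name the core code in section 3 does not define; that code exports `C3k2_MLLABlock1` and `C3k2_MLLABlock2`.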

- # Ultralytics YOLO 🚀, AGPL-3.0 license

- # YOLOv10 object detection model. For Usage examples see https://docs.ultralytics.com/tasks/detect

- # Parameters

- nc: 80 # number of classes

- scales: # model compound scaling constants, i.e. 'model=yolov10n.yaml' will call yolov10.yaml with scale 'n'

- # [depth, width, max_channels]

- n: [0.33, 0.25, 1024]

- backbone:

- # [from, repeats, module, args]

- - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2

- - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4

- - [-1, 3, C2fMLLABlock, [128, True]]

- - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8

- - [-1, 6, C2fMLLABlock, [256, True]]

- - [-1, 1, SCDown, [512, 3, 2]] # 5-P4/16

- - [-1, 6, C2fMLLABlock, [512, True]]

- - [-1, 1, SCDown, [1024, 3, 2]] # 7-P5/32

- - [-1, 3, C2fMLLABlock, [1024, True]]

- - [-1, 1, SPPF, [1024, 5]] # 9

- - [-1, 1, PSA, [1024]] # 10

- # YOLOv10.0n head

- head:

- - [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- - [[-1, 6], 1, Concat, [1]] # cat backbone P4

- - [-1, 3, C2fMLLABlock, [512]] # 13

- - [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- - [[-1, 4], 1, Concat, [1]] # cat backbone P3

- - [-1, 3, C2fMLLABlock, [256]] # 16 (P3/8-small)

- - [-1, 1, Conv, [256, 3, 2]]

- - [[-1, 13], 1, Concat, [1]] # cat head P4

- - [-1, 3, C2fMLLABlock, [512]] # 19 (P4/16-medium)

- - [-1, 1, SCDown, [512, 3, 2]]

- - [[-1, 10], 1, Concat, [1]] # cat head P5

- - [-1, 3, C2fCIB, [1024, True, True]] # 22 (P5/32-large)

- - [[16, 19, 22], 1, v10Detect, [nc]] # Detect(P3, P4, P5)

5.1 C3k2MLLABlock YAML file 2

Training summary for this version: YOLO11-C3k2-MLLABlock-2 summary: 404 layers, 2,518,555 parameters, 2,518,539 gradients, 6.4 GFLOPs

- # Ultralytics YOLO 🚀, AGPL-3.0 license

- # YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

- # Parameters

- nc: 80 # number of classes

- scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'

- # [depth, width, max_channels]

- n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs

- s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs

- m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs

- l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs

- x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

- # YOLO11n backbone

- backbone:

- # [from, repeats, module, args]

- - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2

- - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4

- - [-1, 2, C3k2_MLLABlock2, [256, False, 0.25]]

- - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8

- - [-1, 2, C3k2_MLLABlock2, [512, False, 0.25]]

- - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16

- - [-1, 2, C3k2_MLLABlock2, [512, True]]

- - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32

- - [-1, 2, C3k2_MLLABlock2, [1024, True]]

- - [-1, 1, SPPF, [1024, 5]] # 9

- - [-1, 2, C2PSA, [1024]] # 10

- # YOLO11n head

- head:

- - [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- - [[-1, 6], 1, Concat, [1]] # cat backbone P4

- - [-1, 2, C3k2_MLLABlock2, [512, False]] # 13

- - [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- - [[-1, 4], 1, Concat, [1]] # cat backbone P3

- - [-1, 2, C3k2_MLLABlock2, [256, False]] # 16 (P3/8-small)

- - [-1, 1, Conv, [256, 3, 2]]

- - [[-1, 13], 1, Concat, [1]] # cat head P4

- - [-1, 2, C3k2_MLLABlock2, [512, False]] # 19 (P4/16-medium)

- - [-1, 1, Conv, [512, 3, 2]]

- - [[-1, 10], 1, Concat, [1]] # cat head P5

- - [-1, 2, C3k2_MLLABlock2, [1024, True]] # 22 (P5/32-large)

- - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)

5.2 Training code

Create a .py file, paste in the code below, and set your own file paths; it is then ready to run.

- import warnings

- warnings.filterwarnings('ignore')

- from ultralytics import YOLO

- if __name__ == '__main__':

- model = YOLO('yolov8-MLLA.yaml')

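- # To switch model scale, change the YAML name above: yolov8s.yaml, for example, selects the v8s scale.

- # Likewise, for a modified config named yolov8-XXX.yaml, pass yolov8l-XXX.yaml to use the l scale (change only the name passed to YOLO(), not the config file's own name).

- # model.load('yolov8n.pt') # Optionally load pretrained weights; not recommended for research work, since it makes further accuracy gains hard to obtain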

- model.train(data=r"C:\Users\Administrator\PycharmProjects\yolov5-master\yolov5-master\Construction Site Safety.v30-raw-images_latestversion.yolov8\data.yaml",

- # For other tasks, change `task` in 'ultralytics/cfg/default.yaml' to detect, segment, classify, or pose

- cache=False,

- imgsz=640,

- epochs=150,

- single_cls=False, # whether to train as single-class detection

- batch=16,

- close_mosaic=0,

- workers=0,

- device='0',

- optimizer='SGD', # using SGD

- # resume='runs/train/exp21/weights/last.pt', # to resume training, set this to the path of last.pt

- amp=True, # turn AMP off if the training loss becomes NaN

- project='runs/train',

- name='exp',

- )
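The naming rule described in the comments above (a scale letter right after the version number selects the model scale) can be approximated as follows. This is a simplified sketch with a hypothetical helper name, `guess_scale`, and a regular expression chosen for illustration; it is not taken from the Ultralytics source.

```python
import re
from pathlib import Path

def guess_scale(yaml_name):
    """Return the scale letter that follows the version number, or '' if there is none."""
    match = re.search(r"yolo[v]?\d+([nslmx])", Path(yaml_name).stem)
    return match.group(1) if match else ""

for name in ["yolov8l-XXX.yaml", "yolov8s.yaml", "yolo11n.yaml", "yolov8-MLLA.yaml"]:
    print(name, repr(guess_scale(name)))
```

A name with no scale letter, such as `yolov8-MLLA.yaml`, yields an empty string here; in that situation the scale has to come from the `scales` table in the YAML file itself.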

5.3 Training screenshots for C3k2MLLABlock

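6. Summary

That concludes the main content of this post. I recommend my column of effective YOLOv11 improvements: it is newly opened, with an average quality score of 98. I will keep reproducing papers from the latest top conferences and will also fill in some older improvement mechanisms. If this post helped you, subscribe to the column and follow along for further updates.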