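1. Introduction

This article presents CSWin Transformer as an improvement. It is built on the Transformer architecture and introduces a cross-shaped window self-attention mechanism that processes the horizontal and vertical stripes of an image in parallel. Together the stripes form a cross-shaped window, which improves computational efficiency. It also proposes Locally-enhanced Positional Encoding (LePE), which handles local positional information better. I use it to replace the feature-extraction network (backbone) of YOLO11 so that it extracts more useful features. In my experiments this backbone did improve accuracy on small, medium and large objects. The backbone also comes in several versions, which you can switch between in the source code. The article first explains the main framework and principles, then shows how to add this network structure to the model.

(The content of this article can be further scaled to YOLO11's N, S, M, L and X sizes to make the model even lighter.)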

2. How CSWin Transformer Works

Paper: official paper link

Code: official code link

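2.1 Basic Principles of CSWin Transformer

CSWin Transformer is built on the Transformer architecture and introduces a cross-shaped window self-attention mechanism that processes the horizontal and vertical stripes of an image in parallel, forming cross-shaped windows to improve computational efficiency. It also proposes Locally-enhanced Positional Encoding (LePE), which handles local positional information better, supports arbitrary input resolutions, and is friendly to downstream tasks. The paper reports that with these innovations CSWin Transformer outperforms prior methods on vision tasks such as image classification and object detection.

The basic principles of CSWin Transformer can be summarized as follows: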

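1. Cross-shaped window self-attention: a self-attention mechanism that forms cross-shaped windows along the horizontal and vertical directions, improving processing efficiency.

2. Locally-enhanced Positional Encoding (LePE): a new positional encoding scheme that handles local positional information better and supports input resolutions of any size.

3. Friendly to downstream tasks: LePE makes CSWin Transformer particularly well suited to a wide range of downstream vision tasks.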

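2.2 Cross-Shaped Window Self-Attention

Cross-shaped window self-attention is one of the core features of CSWin Transformer. It splits the attention heads into two groups that process the horizontal and vertical stripes of the image in parallel. This lets the model focus on important features within the cross-shaped region while avoiding the high computational cost of global self-attention. Because each stripe spans the full width or height of the feature map, a token can still interact with distant tokens along its row and column, so the mechanism balances local and long-range information while improving speed and efficiency.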
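A minimal pure-Python sketch (not the official implementation; the helper names are hypothetical) makes the cross shape concrete. It assumes an H×W token grid cut into non-overlapping stripes of width `sw`, which matches how the core code in Section 3 sizes its windows: branch `idx=0` uses windows of `resolution × split_size` (vertical stripes) and `idx=1` uses `split_size × resolution` (horizontal stripes).

```python
def horizontal_stripe(row, col, H, W, sw):
    """Tokens a query at (row, col) attends to in the horizontal-stripe head group."""
    start = (row // sw) * sw  # first row of the stripe containing the query
    return {(r, c) for r in range(start, min(start + sw, H)) for c in range(W)}


def vertical_stripe(row, col, H, W, sw):
    """Tokens a query at (row, col) attends to in the vertical-stripe head group."""
    start = (col // sw) * sw  # first column of the stripe containing the query
    return {(r, c) for r in range(H) for c in range(start, min(start + sw, W))}


def cross_window(row, col, H, W, sw):
    """Union of both head groups: the cross-shaped region one block can see."""
    return horizontal_stripe(row, col, H, W, sw) | vertical_stripe(row, col, H, W, sw)


if __name__ == "__main__":
    H, W, sw = 7, 7, 1
    visible = cross_window(3, 3, H, W, sw)
    for r in range(H):
        print("".join("X" if (r, c) in visible else "." for c in range(W)))
```

Running it prints a 7×7 grid in which row 3 and column 3 are marked, i.e. a cross. Each individual head only sees one stripe; the cross appears because the two groups' outputs are concatenated along the channel dimension (`torch.cat([x1, x2], dim=2)` in `CSWinBlock.forward`).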

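The figure below compares the different self-attention mechanisms discussed in CSWin Transformer:

The figure shows how CSWin Transformer splits the attention heads into two groups, one attending within horizontal stripes and the other within vertical stripes, and processes them in parallel so that together they form a cross-shaped window. This design strikes a better balance between computational cost and model performance. The figure covers variants ranging from full attention to local attention, as well as CSWin's own self-attention strategy, which is key to both the efficiency and the accuracy of the model.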
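To put a rough number on the saving, the sketch below counts query-key pairs per head, i.e. the size of the attention matrix, for global attention versus one stripe group. It is a simplified count that ignores the Q/K/V projections. The 160×160 grid and stripe width 1 are assumptions taken from stage 1 of `CSWin_64_12211_tiny_224` at a 640×640 input (640 // 4 = 160, `split_size[0] = 1`); the function name is hypothetical.

```python
def attention_pairs(H, W, sw=None):
    """Query-key pairs per head on an H x W token grid.

    sw=None: global attention, every token attends to all H*W tokens.
    Otherwise: (horizontal, vertical) counts for stripes of width sw.
    """
    n = H * W
    if sw is None:
        return n * n
    horizontal = n * (sw * W)  # each query sees sw full rows
    vertical = n * (H * sw)    # each query sees sw full columns
    return horizontal, vertical


if __name__ == "__main__":
    full = attention_pairs(160, 160)
    horiz, vert = attention_pairs(160, 160, sw=1)
    print(full, horiz, vert, full // horiz)
```

Here each head builds an attention matrix 160 times smaller than global attention, and the gap grows with resolution. The variants in the core code widen the stripes in later stages (`split_size=[1, 2, 8, 8]`), where the feature maps are smaller.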

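2.3 Locally-Enhanced Positional Encoding

Locally-enhanced Positional Encoding (LePE) is a new positional encoding mechanism in CSWin Transformer that improves on how existing schemes handle local positional information. Unlike conventional positional encodings, LePE is designed specifically to strengthen the model's perception of local image regions, and it supports input resolutions of any size. This makes CSWin Transformer more flexible and effective on input images of different sizes and particularly well suited to downstream vision tasks.

The figure below shows the overall architecture of CSWin Transformer and the details of one CSWin Transformer block.

It shows how cross-shaped window self-attention and LePE are integrated into the different stages of CSWin Transformer and how they are implemented inside a single Transformer block. Together these designs make the model both efficient and effective on vision tasks. The model has four stages, each made up of several CSWin Transformer blocks, and every block contains cross-shaped window self-attention and LePE. From one stage to the next the spatial resolution of the feature map is halved while the number of channels doubles, which lets the network gradually capture more complex features. The right side of the figure details the internal structure of a CSWin Transformer block: the MLP (multi-layer perceptron), LN (layer normalization) and the core cross-shaped window self-attention.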
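The "resolution halves, channels double" progression can be checked with Conv2d arithmetic alone. This sketch applies the standard output-size formula floor((n + 2p - k) / s) + 1 to the layers used in the Section 3 code: the stem `Conv2d(in_chans, embed_dim, 7, 4, 2)` and the `Conv2d(dim, dim_out, 3, 2, 1)` inside each `Merge_Block`. The helper names are hypothetical.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a Conv2d along one axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def stage_shapes(img_size=640, embed_dim=64):
    """(resolution, channels) of the four stages built by the core code."""
    reso = conv_out(img_size, kernel=7, stride=4, padding=2)  # stage1_conv_embed
    dim = embed_dim
    shapes = [(reso, dim)]
    for _ in range(3):  # merge1..merge3: Conv2d(dim, 2 * dim, 3, 2, 1)
        reso = conv_out(reso, kernel=3, stride=2, padding=1)
        dim *= 2
        shapes.append((reso, dim))
    return shapes


if __name__ == "__main__":
    for reso, channels in stage_shapes(640, 64):
        print(f"{reso}x{reso} with {channels} channels")
```

For a 640×640 input the four stages sit at strides 4, 8, 16 and 32, which is why the last three outputs can feed YOLO's P3, P4 and P5 branches.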

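The figure below compares different positional encoding mechanisms: APE, CPE, RPE, and the LePE used in CSWin Transformer. It shows that LePE operates directly on the V (value) part of self-attention as a parallel module. LePE lets positional information enter the self-attention computation more effectively, and compared with the other mechanisms it handles local positional information better.

Because LePE injects positional information directly into the self-attention computation, it gives the model finer-grained awareness of local positions than traditional positional encodings. This is very useful in vision tasks, since it helps the model understand the relative positions of different parts of an image.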
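In formula form, matching the core code in Section 3 (where `get_v` is a 3×3 depthwise `Conv2d` with `groups=dim` applied to V inside each window, `self.scale = head_dim ** -0.5`, and the output is `x = (attn @ v) + lepe`), LePE adds a convolution of V in parallel with the attention branch, with d the head dimension:

```latex
\mathrm{Attention}(Q, K, V) = \mathrm{SoftMax}\!\left(\frac{QK^{\top}}{\sqrt{d}}\right) V + \mathrm{DWConv}_{3\times 3}(V)
```

Because the depthwise convolution only looks at a 3×3 neighbourhood and has no table sized to a particular input, the encoding itself does not depend on the input resolution.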

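2.4 Friendly to Downstream Tasks

Downstream-task friendliness means that a model or technique can easily be reused in later stages or further processing for specific tasks. For CSWin Transformer, the design of LePE supports inputs of arbitrary resolution, so the model adapts more easily to different vision tasks such as image classification, object detection and semantic segmentation. This flexibility means CSWin Transformer can be applied directly to datasets of different resolutions without complex readjustment or extra preprocessing, which lowers the barrier to using it in downstream tasks. Note, however, that the implementation in Section 3 hard-codes the input size in its stem (`Rearrange` with `h = w = img_size // 4`, `img_size=640`), so in this article the input must be 640×640; see the extra modifications at the end of Section 4.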

3. Core Code of CSWin Transformer

See Section 4 for how to use the code.

```python
# ------------------------------------------
# CSWin Transformer
# Copyright (c) Microsoft Corporation.
# Licensed under the MIT License.
# written By Xiaoyi Dong
# ------------------------------------------
import torch
import torch.nn as nn
from timm.data import IMAGENET_DEFAULT_MEAN, IMAGENET_DEFAULT_STD
from timm.models.layers import DropPath, trunc_normal_
from timm.models.registry import register_model
from einops.layers.torch import Rearrange
import torch.utils.checkpoint as checkpoint
import numpy as np


def _make_divisible(v, divisor, min_value=None):
    """
    This function is taken from the original tf repo.
    It ensures that all layers have a channel number that is divisible by 8
    It can be seen here:
    https://github.com/tensorflow/models/blob/master/research/slim/nets/mobilenet/mobilenet.py
    :param v:
    :param divisor:
    :param min_value:
    :return:
    """
    if min_value is None:
        min_value = divisor
    new_v = max(min_value, int(v + divisor / 2) // divisor * divisor)
    # Make sure that round down does not go down by more than 10%.
    if new_v < 0.9 * v:
        new_v += divisor
    return new_v


def _cfg(url='', **kwargs):
    return {
        'url': url,
        'num_classes': 1000, 'input_size': (3, 640, 640), 'pool_size': None,
        'crop_pct': .9, 'interpolation': 'bicubic',
        'mean': IMAGENET_DEFAULT_MEAN, 'std': IMAGENET_DEFAULT_STD,
        'first_conv': 'patch_embed.proj', 'classifier': 'head',
        **kwargs
    }


default_cfgs = {
    'cswin_224': _cfg(),
    'cswin_384': _cfg(
        crop_pct=1.0
    ),
}


class Mlp(nn.Module):
    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x


class LePEAttention(nn.Module):
    def __init__(self, dim, resolution, idx, split_size=7, dim_out=None, num_heads=8, attn_drop=0., proj_drop=0.,
                 qk_scale=None):
        super().__init__()
        self.dim = dim
        self.dim_out = dim_out or dim
        self.resolution = resolution
        self.split_size = split_size
        self.num_heads = num_heads
        head_dim = dim // num_heads
        # NOTE scale factor was wrong in my original version, can set manually to be compat with prev weights
        self.scale = qk_scale or head_dim ** -0.5
        if idx == -1:  # last stage: a single window covering the whole feature map
            H_sp, W_sp = self.resolution, self.resolution
        elif idx == 0:  # vertical stripes: full height, split_size wide
            H_sp, W_sp = self.resolution, self.split_size
        elif idx == 1:  # horizontal stripes: split_size high, full width
            W_sp, H_sp = self.resolution, self.split_size
        else:
            raise ValueError(f"ERROR MODE {idx}")
        self.H_sp = H_sp
        self.W_sp = W_sp
        # LePE: 3x3 depthwise conv applied to V inside each window
        self.get_v = nn.Conv2d(dim, dim, kernel_size=3, stride=1, padding=1, groups=dim)
        self.attn_drop = nn.Dropout(attn_drop)

    def im2cswin(self, x):
        B, N, C = x.shape
        H = W = int(np.sqrt(N))
        x = x.transpose(-2, -1).contiguous().view(B, C, H, W)
        x = img2windows(x, self.H_sp, self.W_sp)
        x = x.reshape(-1, self.H_sp * self.W_sp, self.num_heads, C // self.num_heads).permute(0, 2, 1, 3).contiguous()
        return x

    def get_lepe(self, x, func):
        B, N, C = x.shape
        H = W = int(np.sqrt(N))
        x = x.transpose(-2, -1).contiguous().view(B, C, H, W)
        H_sp, W_sp = self.H_sp, self.W_sp
        x = x.view(B, C, H // H_sp, H_sp, W // W_sp, W_sp)
        x = x.permute(0, 2, 4, 1, 3, 5).contiguous().reshape(-1, C, H_sp, W_sp)  ### B', C, H', W'
        lepe = func(x)  ### B', C, H', W'
        lepe = lepe.reshape(-1, self.num_heads, C // self.num_heads, H_sp * W_sp).permute(0, 1, 3, 2).contiguous()
        x = x.reshape(-1, self.num_heads, C // self.num_heads, self.H_sp * self.W_sp).permute(0, 1, 3, 2).contiguous()
        return x, lepe

    def forward(self, qkv):
        """
        x: B L C
        """
        q, k, v = qkv[0], qkv[1], qkv[2]
        ### Img2Window
        H = W = self.resolution
        B, L, C = q.shape
        assert L == H * W, "flatten img_tokens has wrong size"
        q = self.im2cswin(q)
        k = self.im2cswin(k)
        v, lepe = self.get_lepe(v, self.get_v)
        q = q * self.scale
        attn = (q @ k.transpose(-2, -1))  # B head N C @ B head C N --> B head N N
        attn = nn.functional.softmax(attn, dim=-1, dtype=attn.dtype)
        attn = self.attn_drop(attn)
        x = (attn @ v) + lepe  # attention output plus the LePE term
        x = x.transpose(1, 2).reshape(-1, self.H_sp * self.W_sp, C)  # B head N N @ B head N C
        ### Window2Img
        x = windows2img(x, self.H_sp, self.W_sp, H, W).view(B, -1, C)  # B H' W' C
        return x


class CSWinBlock(nn.Module):
    def __init__(self, dim, reso, num_heads,
                 split_size=7, mlp_ratio=4., qkv_bias=False, qk_scale=None,
                 drop=0., attn_drop=0., drop_path=0.,
                 act_layer=nn.GELU, norm_layer=nn.LayerNorm,
                 last_stage=False):
        super().__init__()
        self.dim = dim
        self.num_heads = num_heads
        self.patches_resolution = reso
        self.split_size = split_size
        self.mlp_ratio = mlp_ratio
        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.norm1 = norm_layer(dim)
        if self.patches_resolution == split_size:
            last_stage = True
        if last_stage:
            self.branch_num = 1
        else:
            self.branch_num = 2
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(drop)
        if last_stage:
            self.attns = nn.ModuleList([
                LePEAttention(
                    dim, resolution=self.patches_resolution, idx=-1,
                    split_size=split_size, num_heads=num_heads, dim_out=dim,
                    qk_scale=qk_scale, attn_drop=attn_drop, proj_drop=drop)
                for i in range(self.branch_num)])
        else:
            # two branches: half the channels/heads for vertical stripes (idx=0),
            # the other half for horizontal stripes (idx=1)
            self.attns = nn.ModuleList([
                LePEAttention(
                    dim // 2, resolution=self.patches_resolution, idx=i,
                    split_size=split_size, num_heads=num_heads // 2, dim_out=dim // 2,
                    qk_scale=qk_scale, attn_drop=attn_drop, proj_drop=drop)
                for i in range(self.branch_num)])
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, out_features=dim, act_layer=act_layer,
                       drop=drop)
        self.norm2 = norm_layer(dim)

    def forward(self, x):
        """
        x: B, H*W, C
        """
        H = W = self.patches_resolution
        B, L, C = x.shape
        assert L == H * W, "flatten img_tokens has wrong size"
        img = self.norm1(x)
        qkv = self.qkv(img).reshape(B, -1, 3, C).permute(2, 0, 1, 3)
        if self.branch_num == 2:
            x1 = self.attns[0](qkv[:, :, :, :C // 2])
            x2 = self.attns[1](qkv[:, :, :, C // 2:])
            attened_x = torch.cat([x1, x2], dim=2)
        else:
            attened_x = self.attns[0](qkv)
        attened_x = self.proj(attened_x)
        x = x + self.drop_path(attened_x)
        x = x + self.drop_path(self.mlp(self.norm2(x)))
        return x


def img2windows(img, H_sp, W_sp):
    """
    img: B C H W
    """
    B, C, H, W = img.shape
    img_reshape = img.view(B, C, H // H_sp, H_sp, W // W_sp, W_sp)
    img_perm = img_reshape.permute(0, 2, 4, 3, 5, 1).contiguous().reshape(-1, H_sp * W_sp, C)
    return img_perm


def windows2img(img_splits_hw, H_sp, W_sp, H, W):
    """
    img_splits_hw: B' H W C
    """
    B = int(img_splits_hw.shape[0] / (H * W / H_sp / W_sp))
    img = img_splits_hw.view(B, H // H_sp, W // W_sp, H_sp, W_sp, -1)
    img = img.permute(0, 1, 3, 2, 4, 5).contiguous().view(B, H, W, -1)
    return img


class Merge_Block(nn.Module):
    def __init__(self, dim, dim_out, norm_layer=nn.LayerNorm):
        super().__init__()
        self.conv = nn.Conv2d(dim, dim_out, 3, 2, 1)
        self.norm = norm_layer(dim_out)

    def forward(self, x):
        B, new_HW, C = x.shape
        H = W = int(np.sqrt(new_HW))
        x = x.transpose(-2, -1).contiguous().view(B, C, H, W)
        x = self.conv(x)
        B, C = x.shape[:2]
        x = x.view(B, C, -1).transpose(-2, -1).contiguous()
        x = self.norm(x)
        return x


class CSWinTransformer(nn.Module):
    """ Vision Transformer with support for patch or hybrid CNN input stage
    """

    def __init__(self, factor=0.5, depth_factor=0.5, img_size=640, patch_size=16, in_chans=3, num_classes=1000,
                 embed_dim=96, depth=[2, 2, 6, 2],
                 split_size=[3, 5, 7],
                 num_heads=12, mlp_ratio=4., qkv_bias=True, qk_scale=None, drop_rate=0., attn_drop_rate=0.,
                 drop_path_rate=0., hybrid_backbone=None, norm_layer=nn.LayerNorm, use_chk=False):
        super().__init__()
        embed_dim = int(embed_dim * factor)  # width scaling (first yaml argument)
        depth = [max(1, int(dim * depth_factor)) for dim in depth]  # depth scaling (second yaml argument)
        self.use_chk = use_chk
        self.num_classes = num_classes
        self.num_features = self.embed_dim = embed_dim  # num_features for consistency with other models
        heads = num_heads
        self.stage1_conv_embed = nn.Sequential(
            nn.Conv2d(in_chans, embed_dim, 7, 4, 2),
            # fixed token grid: the input must be img_size x img_size
            Rearrange('b c h w -> b (h w) c', h=img_size // 4, w=img_size // 4),
            nn.LayerNorm(embed_dim)
        )
        curr_dim = embed_dim
        dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depth))]  # stochastic depth decay rule
        self.stage1 = nn.ModuleList([
            CSWinBlock(
                dim=curr_dim, num_heads=heads[0], reso=img_size // 4, mlp_ratio=mlp_ratio,
                qkv_bias=qkv_bias, qk_scale=qk_scale, split_size=split_size[0],
                drop=drop_rate, attn_drop=attn_drop_rate,
                drop_path=dpr[i], norm_layer=norm_layer)
            for i in range(depth[0])])
        self.merge1 = Merge_Block(curr_dim, curr_dim * 2)
        curr_dim = curr_dim * 2
        self.stage2 = nn.ModuleList(
            [CSWinBlock(
                dim=curr_dim, num_heads=heads[1], reso=img_size // 8, mlp_ratio=mlp_ratio,
                qkv_bias=qkv_bias, qk_scale=qk_scale, split_size=split_size[1],
                drop=drop_rate, attn_drop=attn_drop_rate,
                drop_path=dpr[sum(depth[:1]) + i], norm_layer=norm_layer)
                for i in range(depth[1])])
        self.merge2 = Merge_Block(curr_dim, curr_dim * 2)
        curr_dim = curr_dim * 2
        temp_stage3 = []
        temp_stage3.extend(
            [CSWinBlock(
                dim=curr_dim, num_heads=heads[2], reso=img_size // 16, mlp_ratio=mlp_ratio,
                qkv_bias=qkv_bias, qk_scale=qk_scale, split_size=split_size[2],
                drop=drop_rate, attn_drop=attn_drop_rate,
                drop_path=dpr[sum(depth[:2]) + i], norm_layer=norm_layer)
                for i in range(depth[2])])
        self.stage3 = nn.ModuleList(temp_stage3)
        self.merge3 = Merge_Block(curr_dim, curr_dim * 2)
        curr_dim = curr_dim * 2
        self.stage4 = nn.ModuleList(
            [CSWinBlock(
                dim=curr_dim, num_heads=heads[3], reso=img_size // 32, mlp_ratio=mlp_ratio,
                qkv_bias=qkv_bias, qk_scale=qk_scale, split_size=split_size[-1],
                drop=drop_rate, attn_drop=attn_drop_rate,
                drop_path=dpr[sum(depth[:-1]) + i], norm_layer=norm_layer, last_stage=True)
                for i in range(depth[-1])])
        self.norm = norm_layer(curr_dim)
        # Classifier head (unused when the model serves as a YOLO backbone)
        self.out_dim = curr_dim  # needed by reset_classifier
        self.head = nn.Linear(curr_dim, num_classes) if num_classes > 0 else nn.Identity()
        trunc_normal_(self.head.weight, std=0.02)
        self.apply(self._init_weights)
        # channels of the four feature maps returned by forward(); read by parse_model
        self.width_list = [i.size(1) for i in self.forward(torch.randn(1, in_chans, img_size, img_size))]

    def _init_weights(self, m):
        if isinstance(m, nn.Linear):
            trunc_normal_(m.weight, std=.02)
            if isinstance(m, nn.Linear) and m.bias is not None:
                nn.init.constant_(m.bias, 0)
        elif isinstance(m, (nn.LayerNorm, nn.BatchNorm2d)):
            nn.init.constant_(m.bias, 0)
            nn.init.constant_(m.weight, 1.0)

    @torch.jit.ignore
    def no_weight_decay(self):
        return {'pos_embed', 'cls_token'}

    def get_classifier(self):
        return self.head

    def reset_classifier(self, num_classes, global_pool=''):
        if self.num_classes != num_classes:
            print('reset head to', num_classes)
            self.num_classes = num_classes
            self.head = nn.Linear(self.out_dim, num_classes) if num_classes > 0 else nn.Identity()
            self.head = self.head.to(self.norm.weight.device)  # follow the model's device instead of forcing CUDA
            trunc_normal_(self.head.weight, std=.02)
            if self.head.bias is not None:
                nn.init.constant_(self.head.bias, 0)

    def forward(self, x):
        x = self.stage1_conv_embed(x)
        unique_tensors = {}  # (H, W) -> feature map of that size, one entry per stage
        for pre, blocks in zip([None, self.merge1, self.merge2, self.merge3],
                               [self.stage1, self.stage2, self.stage3, self.stage4]):
            if pre is not None:
                x = pre(x)
            for blk in blocks:
                x = checkpoint.checkpoint(blk, x) if self.use_chk else blk(x)
            # tokens (B, L, C) -> feature map (B, C, H, W); transpose before reshaping so the
            # spatial layout is preserved (a plain reshape would scramble tokens and channels)
            B, L, C = x.shape
            side = int(L ** 0.5)
            y = x.transpose(1, 2).reshape(B, C, side, side)
            unique_tensors[(side, side)] = y
        result_list = list(unique_tensors.values())[-4:]
        return result_list


def _conv_filter(state_dict, patch_size=16):
    """ convert patch embedding weight from manual patchify + linear proj to conv"""
    out_dict = {}
    for k, v in state_dict.items():
        if 'patch_embed.proj.weight' in k:
            v = v.reshape((v.shape[0], 3, patch_size, patch_size))
        out_dict[k] = v
    return out_dict


### 224 models
@register_model
def CSWin_64_12211_tiny_224(factor, depth_factor, **kwargs):
    model = CSWinTransformer(factor=factor, depth_factor=depth_factor, patch_size=4, embed_dim=64, depth=[1, 2, 21, 1],
                             split_size=[1, 2, 8, 8], num_heads=[2, 4, 8, 16], mlp_ratio=4., **kwargs)
    model.default_cfg = default_cfgs['cswin_224']
    return model


@register_model
def CSWin_64_24322_small_224(factor, depth_factor, **kwargs):
    model = CSWinTransformer(factor=factor, depth_factor=depth_factor, patch_size=4, embed_dim=64, depth=[2, 4, 32, 2],
                             split_size=[1, 2, 8, 8], num_heads=[2, 4, 8, 16], mlp_ratio=4., **kwargs)
    model.default_cfg = default_cfgs['cswin_224']
    return model


@register_model
def CSWin_96_24322_base_224(factor, depth_factor, **kwargs):
    model = CSWinTransformer(factor=factor, depth_factor=depth_factor, patch_size=4, embed_dim=96, depth=[2, 4, 32, 2],
                             split_size=[1, 2, 8, 8], num_heads=[4, 8, 16, 32], mlp_ratio=4., **kwargs)
    model.default_cfg = default_cfgs['cswin_224']
    return model


@register_model
def CSWin_144_24322_large_224(factor, depth_factor, **kwargs):
    model = CSWinTransformer(factor=factor, depth_factor=depth_factor, patch_size=4, embed_dim=144, depth=[2, 4, 32, 2],
                             split_size=[1, 2, 8, 8], num_heads=[6, 12, 24, 24], mlp_ratio=4., **kwargs)
    model.default_cfg = default_cfgs['cswin_224']
    return model


if __name__ == '__main__':
    model = CSWin_64_12211_tiny_224(factor=0.25, depth_factor=0.5)
    inputs = torch.randn((1, 3, 640, 640))
    for i in model(inputs):
        print(i.size())
```

四、手把手教你添加CSwinTransformer机制

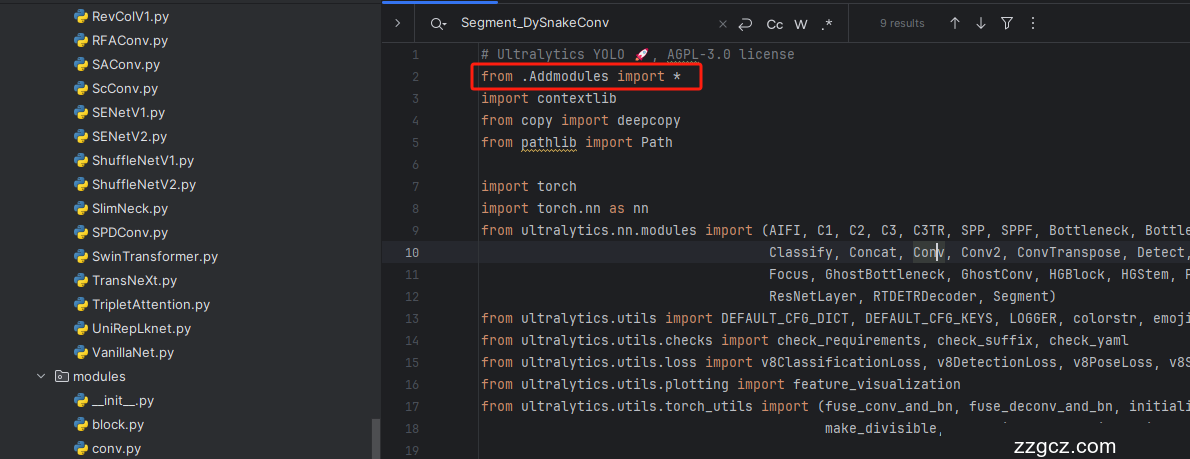

4.1 修改一

第一步还是建立文件,我们找到如下 ultralytics /nn文件夹下建立一个目录名字呢就是'Addmodules'文件夹( 用群内的文件的话已经有了无需新建) !然后在其内部建立一个新的py文件将核心代码复制粘贴进去即可

4.2 修改二

第二步我们在该目录下创建一个新的py文件名字为'__init__.py'( 用群内的文件的话已经有了无需新建) ,然后在其内部导入我们的检测头如下图所示。
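Steps 1 and 2 only create files, so they can also be scripted. The sketch below assumes the core code is saved as `CSWinTransformer.py` (a hypothetical name; the article does not fix it) and that `__init__.py` re-exports it with `from .CSWinTransformer import *`; the exact import shown in the article's figure may differ. As far as I know, timm's `register_model` adds each decorated function to the module's `__all__`, so the star import would expose the four `CSWin_*` factories.

```python
import tempfile
from pathlib import Path

# Hypothetical module name for the core code of Section 3; any name works
# as long as __init__.py imports from the same module.
CORE_MODULE = "CSWinTransformer"


def scaffold_addmodules(repo_root, core_code=""):
    """Create ultralytics/nn/Addmodules/ with the core file and an __init__.py.

    Existing files are not overwritten, and the import line is added only once.
    """
    pkg = Path(repo_root) / "ultralytics" / "nn" / "Addmodules"
    pkg.mkdir(parents=True, exist_ok=True)
    core = pkg / f"{CORE_MODULE}.py"
    if not core.exists():
        core.write_text(core_code, encoding="utf-8")
    init = pkg / "__init__.py"
    line = f"from .{CORE_MODULE} import *\n"
    text = init.read_text(encoding="utf-8") if init.exists() else ""
    if line not in text:
        init.write_text(text + line, encoding="utf-8")
    return pkg


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        pkg = scaffold_addmodules(root, "# paste the core code here\n")
        print(sorted(p.name for p in pkg.iterdir()))
```

In a real checkout you would pass the repository root instead of a temporary directory, and paste the Section 3 code as `core_code`.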

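4.3 Modification 3

Step 3: open 'ultralytics/nn/tasks.py' and import and register our module there (if you use the files from the group, this is already done and you can go straight to step 4)!

From now on all my tutorials will follow this format, because I assume everyone is modifying the files from my group!

4.4 Modification 4

Add the following two lines of code!

4.5 Modification 5

Go to somewhere past line 700 (see the figure for the exact location) and modify the code as shown there: add the part in the red box. Note that the models are listed by function name only, without ().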

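```python
elif m in {CSWin_64_12211_tiny_224, CSWin_64_24322_small_224,
           CSWin_96_24322_base_224, CSWin_144_24322_large_224}:  # add the models you use; the rest is identical
    m = m(*args)
    c2 = m.width_list  # list of output channels
    backbone = True
```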

4.6 Modification 6

Both red-boxed parts below need to be changed.

```python
if isinstance(c2, list):
    m_ = m
    m_.backbone = True
else:
    m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args)  # module
    t = str(m)[8:-2].replace('__main__.', '')  # module type
m.np = sum(x.numel() for x in m_.parameters())  # number params
m_.i, m_.f, m_.type = i + 4 if backbone else i, f, t  # attach index, 'from' index, type
```

4.7 Modification 7

The following also needs to be modified; follow my version exactly.

Replace the original code with the code below.

```python
if verbose:
    LOGGER.info(f'{i:>3}{str(f):>20}{n_:>3}{m.np:10.0f}  {t:<45}{str(args):<30}')  # print
save.extend(x % (i + 4 if backbone else i) for x in ([f] if isinstance(f, int) else f) if x != -1)  # append to savelist
layers.append(m_)
if i == 0:
    ch = []
if isinstance(c2, list):
    ch.extend(c2)
    if len(c2) != 5:
        ch.insert(0, 0)
else:
    ch.append(c2)
```
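The net effect of Modifications 6 and 7 is that one yaml line now contributes five entries to `ch` and shifts every later layer index by 4. The sketch below replays that bookkeeping without Ultralytics. `width_list` is assumed to be `[16, 32, 64, 128]`, which is what `CSWin_64_12211_tiny_224` with factor 0.25 produces (`int(64 * 0.25) = 16`, doubled per stage); the function names are hypothetical.

```python
def backbone_channels(width_list):
    """Replay Modification 7: the `ch` list right after the backbone (layer 0)."""
    ch = list(width_list)   # ch = [] for i == 0, then ch.extend(c2)
    if len(ch) != 5:
        ch.insert(0, 0)     # placeholder so stage k ends up at index k
    return ch


def model_index(yaml_index, backbone=True):
    """Replay Modification 6: the index a yaml layer gets inside the built model."""
    return yaml_index + 4 if backbone else yaml_index


if __name__ == "__main__":
    ch = backbone_channels([16, 32, 64, 128])
    print(ch)              # channels available to layers that reference 0-4
    print(model_index(1))  # SPPF, the second yaml line
    print(ch[3], ch[2])    # what Concat [-1, 3] and [-1, 2] read (P4, P3)
```

This is why the yaml in Section 5.1 labels SPPF as layer 5 and concatenates with indices 3 (P4) and 2 (P3).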

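4.8 Modification 8

Modification 8 differs from the previous ones: it changes part of the forward pass and is outside the parse_model method.

You can check the line numbers in the figure; we are still in the same tasks.py file. Several forward methods in this file look alike, so don't pick the wrong one: it is the one around line 70! The code is provided below, so you can copy and paste it directly. When I have time I will make a video on this part.

The code is as follows: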

```python
def _predict_once(self, x, profile=False, visualize=False, embed=None):
    """
    Perform a forward pass through the network.

    Args:
        x (torch.Tensor): The input tensor to the model.
        profile (bool): Print the computation time of each layer if True, defaults to False.
        visualize (bool): Save the feature maps of the model if True, defaults to False.
        embed (list, optional): A list of feature vectors/embeddings to return.

    Returns:
        (torch.Tensor): The last output of the model.
    """
    y, dt, embeddings = [], [], []  # outputs
    for m in self.model:
        if m.f != -1:  # if not from previous layer
            x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
        if profile:
            self._profile_one_layer(m, x, dt)
        if hasattr(m, 'backbone'):
            x = m(x)
            if len(x) != 5:  # 0 - 5
                x.insert(0, None)
            for index, i in enumerate(x):
                if index in self.save:
                    y.append(i)
                else:
                    y.append(None)
            x = x[-1]  # pass the last output on to the next layer
        else:
            x = m(x)  # run
            y.append(x if m.i in self.save else None)  # save output
        if visualize:
            feature_visualization(x, m.type, m.i, save_dir=visualize)
        if embed and m.i in embed:
            embeddings.append(nn.functional.adaptive_avg_pool2d(x, (1, 1)).squeeze(-1).squeeze(-1))  # flatten
            if m.i == max(embed):
                return torch.unbind(torch.cat(embeddings, 1), dim=0)
    return x
```
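The backbone branch of `_predict_once` can be replayed the same way. In this sketch strings stand in for the four feature maps, and `save` is assumed to be the layer indices that the yaml in Section 5.1 references later (2, 3, 6, 9 from the Concat layers and 12, 15, 18 from Detect); the function name is hypothetical.

```python
def scatter_backbone_outputs(features, save):
    """Replay the `hasattr(m, 'backbone')` branch of the modified _predict_once.

    Returns (y, x): the per-layer output cache and the value passed to the next layer.
    """
    x = list(features)
    if len(x) != 5:
        x.insert(0, None)  # index 0 is the placeholder, the stages become 1-4
    y = [out if index in save else None for index, out in enumerate(x)]
    return y, x[-1]        # the last (stride-32) output feeds SPPF


if __name__ == "__main__":
    feats = ["stride4", "stride8", "stride16", "stride32"]
    y, x = scatter_backbone_outputs(feats, save={2, 3, 6, 9, 12, 15, 18})
    print(y)
    print(x)
```

After this step `y` holds exactly five entries, so the next layer (SPPF) is stored at index 5, consistent with the `+ 4` offset applied in `parse_model`.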

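That completes the modifications. There are many details here, so be careful not to replace more code than needed, which causes errors, and don't skip any step: either mistake will make the run fail, and the resulting errors are very hard to track down!

Note!!! An extra modification!

Those who follow me know that most of my modifications are the same, but this network needs one extra step involving the parameter `s`: change the `s` shown below to 640 and it will run without problems. The reason is that the core code builds its stem with `Rearrange('b c h w -> b (h w) c', h=img_size // 4, w=img_size // 4)` and `img_size=640`, so the backbone only accepts 640×640 inputs, presumably including the dummy s×s image the model is run on when it is built.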
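A quick arithmetic sketch of why 640 is required, assuming the default `img_size=640` of the core code: the stem `Conv2d(in_chans, embed_dim, 7, 4, 2)` must output exactly `img_size // 4 = 160` rows and columns for the stem's `Rearrange(..., h=160, w=160)` to accept it. The function names are hypothetical, and computing strides as the probe size divided by each output's height is an assumption about how the detection strides are derived, not a copy of Ultralytics code.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a Conv2d along one axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def probe_strides(s, img_size=640):
    """Return the P3/P4/P5 strides for an s x s probe image, or None if it cannot pass.

    None means the stem output does not match the Rearrange built for img_size.
    """
    reso = conv_out(s, 7, 4, 2)       # stage1_conv_embed
    if reso != img_size // 4:
        return None
    sizes = []
    for _ in range(3):                # merge1..merge3: Conv2d(dim, dim_out, 3, 2, 1)
        reso = conv_out(reso, 3, 2, 1)
        sizes.append(reso)
    return [s // f for f in sizes]    # P3, P4, P5


if __name__ == "__main__":
    print(probe_strides(640))  # matches the 160x160 Rearrange
    print(probe_strides(256))  # a smaller probe: the stem gives 64, not 160
```

A 640 probe yields strides 8, 16 and 32 as expected, while any other probe size fails at the stem.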

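Fixing the FLOPs printing issue

Open 'ultralytics/utils/torch_utils.py' and modify it as shown in the figure below; otherwise the FLOPs are often not printed.

Notes!!!

If you get a shape-mismatch error during validation, you can fix the image size of the validation set as follows:

Open ultralytics/models/yolo/detect/train.py, find the build_dataset function of the DetectionTrainer class, and change its argument rect=mode == 'val' to rect=False.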
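The shape error with rectangular validation batches comes from the same fixed-size design. Besides the fixed `Rearrange`, `LePEAttention` and `Merge_Block` recover the grid with `H = W = int(np.sqrt(N))`, i.e. they assume a square token map. This sketch checks an h×w input against both assumptions; it assumes h and w are multiples of 4 (letterboxed YOLO shapes are multiples of 32), the function name is hypothetical, and 640×480 is just an example shape.

```python
import math


def backbone_accepts(h, w, img_size=640):
    """True if an h x w input satisfies the square, fixed-size assumptions of the core code."""
    n = (h // 4) * (w // 4)   # tokens after the stride-4 stem
    side = math.isqrt(n)      # what int(sqrt(N)) recovers
    square = side * side == n
    return square and side == img_size // 4


if __name__ == "__main__":
    for shape in [(640, 640), (640, 480), (480, 480)]:
        print(shape, backbone_accepts(*shape))
```

With `rect=False`, validation images should be letterboxed to a square `imgsz` instead, which keeps every batch at 640×640.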

5. YAML File for CSWin Transformer

Copy the following yaml file and run it!

5.1 CSWin Transformer yaml file, version 1

Training info for this version: YOLO11-CSWinTransformer summary: 472 layers, 2,486,455 parameters, 2,486,439 gradients, 5.9 GFLOPs

Usage: in [-1, 1, CSWin_64_12211_tiny_224, [0.25, 0.5]] below, 0.25 is the channel (width) scaling factor: use 0.25 for YOLO11n, 0.5 for YOLO11s, 1.0 for YOLO11m, 1.0 for YOLO11l and 1.5 for YOLO11x, according to the YOLO11 size you train.

0.5 is the depth factor of the model.

Supported versions: ['CSWin_64_12211_tiny_224', "CSWin_64_24322_small_224", "CSWin_96_24322_base_224", "CSWin_144_24322_large_224"]
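The two numbers map directly onto `CSWinTransformer.__init__` in Section 3: `embed_dim = int(embed_dim * factor)` and `depth = [max(1, int(d * depth_factor)) for d in depth]`. The sketch below replays that arithmetic for the four factories (embed dims and depths copied from the core code; the dictionary and function names are hypothetical) so you can see which channels the backbone reports through `width_list`.

```python
VARIANTS = {  # name: (embed_dim, depth) as passed by the factory functions
    "CSWin_64_12211_tiny_224": (64, [1, 2, 21, 1]),
    "CSWin_64_24322_small_224": (64, [2, 4, 32, 2]),
    "CSWin_96_24322_base_224": (96, [2, 4, 32, 2]),
    "CSWin_144_24322_large_224": (144, [2, 4, 32, 2]),
}


def scaled_config(name, factor, depth_factor):
    """Return (width_list, blocks per stage) after width/depth scaling."""
    embed_dim, depth = VARIANTS[name]
    c = int(embed_dim * factor)
    blocks = [max(1, int(d * depth_factor)) for d in depth]
    return [c, 2 * c, 4 * c, 8 * c], blocks


if __name__ == "__main__":
    for name in VARIANTS:
        print(name, scaled_config(name, 0.25, 0.5))
```

Note that `num_heads` is not scaled, so small factors leave each head with few channels: for the tiny variant at 0.25, stage 1 has 16 channels split into two branches of 8 channels with one head each.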

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# In [-1, 1, CSWin_64_12211_tiny_224, [0.25, 0.5]] below, 0.25 is the channel scaling factor:
# 0.25 for YOLO11n, 0.5 for YOLO11s, 1.0 for YOLO11m, 1.0 for YOLO11l, 1.5 for YOLO11x. Set it to match the YOLO11 size you train.
# 0.5 is the depth factor of the model.
# Supported versions: __all__ = ['CSWin_64_12211_tiny_224', "CSWin_64_24322_small_224", "CSWin_96_24322_base_224", "CSWin_144_24322_large_224"]

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, CSWin_64_12211_tiny_224, [0.25, 0.5]] # 0-4: index 0 is a placeholder, 1-4 are the stride 4/8/16/32 outputs. One line becomes four layers, so don't let the yaml format limit your thinking; see the video in the group if the diagram is unclear.
  - [-1, 1, SPPF, [1024, 5]] # 5
  - [-1, 2, C2PSA, [1024]] # 6

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 3], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 9

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 2], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 12 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 9], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 15 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 6], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 18 (P5/32-large)

  - [[12, 15, 18], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

5.2 Training script

```python
import warnings
warnings.filterwarnings('ignore')
from ultralytics import YOLO

if __name__ == '__main__':
    # replace this path with the location of the CSWinTransformer yaml from Section 5.1
    model = YOLO('ultralytics/cfg/models/v8/yolov8-C2f-FasterBlock.yaml')
    # model.load('yolov8n.pt')  # loading pretrain weights
    model.train(data=r'path/to/your/dataset.yaml',  # replace with the path of your dataset yaml
                # for other tasks, change `task` in 'ultralytics/cfg/default.yaml' to detect, segment, classify or pose
                cache=False,
                imgsz=640,  # must stay 640: the backbone's stem is built for 640x640 inputs
                epochs=150,
                single_cls=False,  # whether this is single-class detection
                batch=4,
                close_mosaic=10,
                workers=0,
                device='0',
                optimizer='SGD',  # using SGD
                # resume='',  # to resume training, set this to the path of last.pt
                amp=False,  # turn AMP off if the training loss becomes NaN
                project='runs/train',
                name='exp',
                )
```

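6. Successful Run Log

Below is a screenshot of a successful run with one epoch of training completed; the image is too large to also capture the second epoch. After these modifications the printed output has a small glitch, but it does not affect any functionality; I will fix it when I find time.

7. Summary

That's all for this article. I recommend my column on effective YOLO11 improvements. It is newly launched and has an average quality score of 98; going forward I will reproduce papers from the latest top conferences and also revisit some older improvement mechanisms. The column is currently free to read (for a limited time, so follow early). If this article helped you, subscribe to the column and follow for more updates!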