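1. Introduction

The improvement introduced in this article is RIDNet, a single-stage blind denoising network for real images. RIDNet (Real Image Denoising with Feature Attention) is a convolutional neural network (CNN) for real-image denoising, designed to address the limited performance of existing denoising methods on images with real noise. With its single-stage structure and feature attention mechanism, RIDNet has shown strong results on a range of datasets. The figure below shows its results, including a comparison with other image denoising networks.

You are welcome to subscribe to my column and learn YOLO together!

2. Principles and mechanisms of the RIDNet network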

Official paper: click here to open the official paper

Official code: click here to open the official code

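As introduced above, RIDNet is a single-stage CNN that relies on feature attention. It consists of three main modules:

1. Feature Extraction Module: a single convolutional layer that extracts initial features from the noisy input image.

2. Feature Learning Module: its core is the Enhanced Attention Module (EAM), which combines a residual-on-residual structure with feature attention to strengthen feature learning. The EAM has two main parts:

(1) Feature extraction sub-module: extracts and learns features through two dilated convolution layers and one merging convolution layer.

(2) Feature attention sub-module: uses global average pooling and a self-gating mechanism to produce feature attention, re-weighting each channel so that important features stand out.

3. Reconstruction Module: a single convolutional layer that reconstructs the denoised image from the learned features.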
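The dilated convolutions in the feature extraction sub-module keep the feature map at the input resolution only when the padding matches the dilation. The following is a minimal sketch of that arithmetic, using the standard convolution output-size formula; `conv_out_size` is a hypothetical helper written for this illustration and is not part of RIDNet or PyTorch.

```python
def conv_out_size(size, kernel=3, stride=1, padding=1, dilation=1):
    """Output length of a convolution along one spatial axis."""
    # A dilated kernel covers dilation * (kernel - 1) + 1 input pixels.
    effective_kernel = dilation * (kernel - 1) + 1
    return (size + 2 * padding - effective_kernel) // stride + 1


# For a 3x3 kernel with dilation d, padding = d preserves the spatial size.
for d in (1, 2, 3, 4):
    print(f"dilation={d}, padding={d} -> {conv_out_size(640, padding=d, dilation=d)}")

# With a mismatched padding the feature map shrinks instead.
print(conv_out_size(640, padding=1, dilation=2))
```

This is why the dilated layers in the core code below pass the same number for padding and dilation: the branch outputs can then be concatenated and added back to the input without any resizing.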

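Conclusion: RIDNet was evaluated extensively on multiple synthetic and real-noise datasets, where it compares favorably with state-of-the-art algorithms in both quantitative metrics (such as PSNR) and visual quality. By combining feature attention with a residual-on-residual structure, it denoises real images effectively. Its single-stage structure, skip connections, and feature attention mechanism support efficient feature learning and information flow.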
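Since PSNR is the quantitative metric mentioned above, here is a small sketch of how it is computed from the mean squared error. The pixel values are made-up numbers for illustration only, and `psnr` is a hypothetical helper rather than code from the RIDNet repository.

```python
import math


def psnr(reference, estimate, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    if len(reference) != len(estimate):
        raise ValueError("inputs must have the same number of pixels")
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Toy example: a denoised result closer to the clean image scores a higher PSNR.
clean = [52, 120, 200, 33]
noisy = [60, 110, 190, 40]
denoised = [53, 119, 202, 33]
print(f"noisy:    {psnr(clean, noisy):.2f} dB")
print(f"denoised: {psnr(clean, denoised):.2f} dB")
```

A higher value means the estimate is closer to the reference, which is how denoising results are usually ranked.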

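3. Core code

The baseline version of this code originally required 1000+ GFLOPs. I reduced the number of Block layers and cut the channel count, which brings the computational cost down to the 20+ GFLOPs range (see the model summary in section 5.1).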

```python
import math

import torch
import torch.nn as nn
import torch.nn.functional as F


def default_conv(in_channels, out_channels, kernel_size, bias=True):
    # Convolution padded so that odd kernel sizes keep the spatial size.
    return nn.Conv2d(
        in_channels, out_channels, kernel_size,
        padding=(kernel_size // 2), bias=bias)


class MeanShift(nn.Conv2d):
    """1x1 convolution that subtracts (sign=-1) or adds (sign=1) the RGB mean."""

    def __init__(self, rgb_range, rgb_mean, rgb_std, sign=-1):
        super(MeanShift, self).__init__(3, 3, kernel_size=1)
        std = torch.Tensor(rgb_std)
        self.weight.data = torch.eye(3).view(3, 3, 1, 1)
        self.weight.data.div_(std.view(3, 1, 1, 1))
        self.bias.data = sign * rgb_range * torch.Tensor(rgb_mean)
        self.bias.data.div_(std)
        # NOTE: this only sets a plain attribute on the module; it does not
        # freeze the weight and bias. It is kept unchanged so that the
        # parameter and gradient counts reported in section 5.1 still apply.
        self.requires_grad = False


def init_weights(modules):
    # Placeholder: PyTorch's default initialization is used.
    pass


class Merge_Run(nn.Module):
    """Two parallel conv branches (plain and dilated), merged and added to the input."""

    def __init__(self,
                 in_channels, out_channels,
                 ksize=3, stride=1, pad=1, dilation=1):
        super(Merge_Run, self).__init__()

        self.body1 = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, ksize, stride, pad),
            nn.ReLU(inplace=True)
        )
        self.body2 = nn.Sequential(
            # padding=2, dilation=2 keeps the spatial size for a 3x3 kernel
            nn.Conv2d(in_channels, out_channels, ksize, stride, 2, 2),
            nn.ReLU(inplace=True)
        )
        self.body3 = nn.Sequential(
            nn.Conv2d(in_channels * 2, out_channels, ksize, stride, pad),
            nn.ReLU(inplace=True)
        )
        init_weights(self.modules)

    def forward(self, x):
        out1 = self.body1(x)
        out2 = self.body2(x)
        c = torch.cat([out1, out2], dim=1)
        c_out = self.body3(c)
        out = c_out + x
        return out


class Merge_Run_dual(nn.Module):
    """Like Merge_Run, but each branch stacks two dilated convolutions."""

    def __init__(self,
                 in_channels, out_channels,
                 ksize=3, stride=1, pad=1, dilation=1):
        super(Merge_Run_dual, self).__init__()

        # The second conv of each branch consumes the first conv's output,
        # so its input width is out_channels.
        self.body1 = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, ksize, stride, pad),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, ksize, stride, 2, 2),
            nn.ReLU(inplace=True)
        )
        self.body2 = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, ksize, stride, 3, 3),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, ksize, stride, 4, 4),
            nn.ReLU(inplace=True)
        )
        # Merging conv: takes the two concatenated branch outputs.
        self.body3 = nn.Sequential(
            nn.Conv2d(out_channels * 2, out_channels, ksize, stride, pad),
            nn.ReLU(inplace=True)
        )
        init_weights(self.modules)

    def forward(self, x):
        out1 = self.body1(x)
        out2 = self.body2(x)
        c = torch.cat([out1, out2], dim=1)
        c_out = self.body3(c)
        out = c_out + x  # residual connection (needs in_channels == out_channels)
        return out


class BasicBlock(nn.Module):
    """Conv + ReLU."""

    def __init__(self,
                 in_channels, out_channels,
                 ksize=3, stride=1, pad=1):
        super(BasicBlock, self).__init__()

        self.body = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, ksize, stride, pad),
            nn.ReLU(inplace=True)
        )
        init_weights(self.modules)

    def forward(self, x):
        out = self.body(x)
        return out


class BasicBlockSig(nn.Module):
    """Conv + Sigmoid, used as the gate of the channel attention."""

    def __init__(self,
                 in_channels, out_channels,
                 ksize=3, stride=1, pad=1):
        super(BasicBlockSig, self).__init__()

        self.body = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, ksize, stride, pad),
            nn.Sigmoid()
        )
        init_weights(self.modules)

    def forward(self, x):
        out = self.body(x)
        return out


class ResidualBlock(nn.Module):
    def __init__(self,
                 in_channels, out_channels):
        super(ResidualBlock, self).__init__()

        self.body = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, 3, 1, 1),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, 3, 1, 1),
        )
        init_weights(self.modules)

    def forward(self, x):
        out = self.body(x)
        out = F.relu(out + x)
        return out


class EResidualBlock(nn.Module):
    """Residual block with two (optionally grouped) 3x3 convs and a 1x1 conv."""

    def __init__(self,
                 in_channels, out_channels,
                 group=1):
        super(EResidualBlock, self).__init__()

        self.body = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, 3, 1, 1, groups=group),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, 3, 1, 1, groups=group),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, 1, 1, 0),
        )
        init_weights(self.modules)

    def forward(self, x):
        out = self.body(x)
        out = F.relu(out + x)
        return out


class CALayer(nn.Module):
    """Feature (channel) attention: global average pooling followed by a gate."""

    def __init__(self, channel, reduction=16):
        super(CALayer, self).__init__()

        self.avg_pool = nn.AdaptiveAvgPool2d(1)
        self.c1 = BasicBlock(channel, channel // reduction, 1, 1, 0)
        self.c2 = BasicBlockSig(channel // reduction, channel, 1, 1, 0)

    def forward(self, x):
        y = self.avg_pool(x)  # (N, C, 1, 1)
        y1 = self.c1(y)
        y2 = self.c2(y1)      # per-channel weights in (0, 1)
        return x * y2


class Block(nn.Module):
    """Enhanced Attention Module (EAM)."""

    def __init__(self, in_channels, out_channels, group=1):
        super(Block, self).__init__()

        self.r1 = Merge_Run_dual(in_channels, out_channels)
        self.r2 = ResidualBlock(in_channels, out_channels)
        self.r3 = EResidualBlock(in_channels, out_channels)
        # self.g = ops.BasicBlock(in_channels, out_channels, 1, 1, 0)
        self.ca = CALayer(in_channels)

    def forward(self, x):
        r1 = self.r1(x)
        r2 = self.r2(r1)
        r3 = self.r3(r2)
        # g = self.g(r3)
        out = self.ca(r3)
        return out


class RIDNET(nn.Module):
    def __init__(self, args):
        # `args` is accepted for compatibility with the caller but is not used.
        super(RIDNET, self).__init__()

        n_feats = 16
        kernel_size = 3
        rgb_range = 255
        mean = (0.4488, 0.4371, 0.4040)
        std = (1.0, 1.0, 1.0)

        self.sub_mean = MeanShift(rgb_range, mean, std)
        self.add_mean = MeanShift(rgb_range, mean, std, 1)

        self.head = BasicBlock(3, n_feats, kernel_size, 1, 1)

        # b1-b3 are still constructed (and counted as parameters),
        # but forward() only runs b4.
        self.b1 = Block(n_feats, n_feats)
        self.b2 = Block(n_feats, n_feats)
        self.b3 = Block(n_feats, n_feats)
        self.b4 = Block(n_feats, n_feats)

        self.tail = nn.Conv2d(n_feats, 3, kernel_size, 1, 1, 1)

    def forward(self, x):
        s = self.sub_mean(x)
        h = self.head(s)

        # b1 = self.b1(h)
        # b2 = self.b2(b1)
        # b3 = self.b3(b2)
        b_out = self.b4(h)

        res = self.tail(b_out)
        out = self.add_mean(res)
        f_out = out + x  # global skip connection

        return f_out


if __name__ == "__main__":
    # Generate a sample image
    image_size = (1, 3, 640, 640)
    image = torch.rand(*image_size)

    # Model
    model = RIDNET(3)

    out = model(image)
    print(out.size())  # torch.Size([1, 3, 640, 640])
```

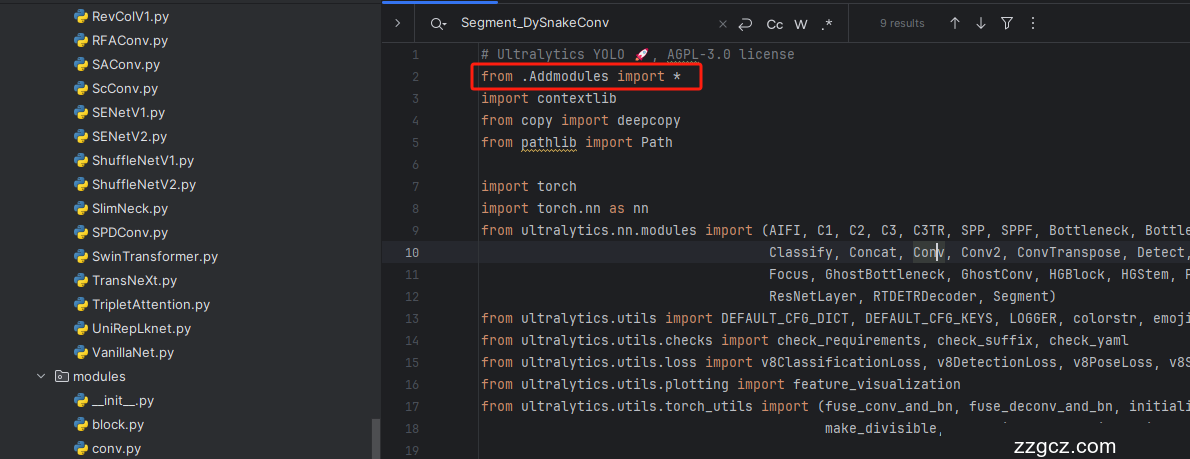
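To see why cutting the channel count matters so much for a network that runs at full input resolution, the cost of a single 3x3 convolution can be estimated by hand. This is only a rough sketch: `conv_params` and `conv_macs` are hypothetical helpers, 16 is the `n_feats` value used in the code above, and 64 is an illustrative wider setting chosen as an assumption, not a figure taken from this article.

```python
def conv_params(c_in, c_out, k=3, bias=True):
    """Number of learnable parameters in a k x k convolution."""
    return k * k * c_in * c_out + (c_out if bias else 0)


def conv_macs(c_in, c_out, height, width, k=3):
    """Multiply-accumulate operations for a stride-1, size-preserving convolution."""
    return k * k * c_in * c_out * height * width


# Compare a wider layer (assumed 64 channels) with the 16-channel layer used here,
# both applied to a 640 x 640 feature map.
for channels in (64, 16):
    params = conv_params(channels, channels)
    gmacs = conv_macs(channels, channels, 640, 640) / 1e9
    print(f"{channels} channels: {params} parameters, {gmacs:.2f} GMACs per layer")
```

Because both the input and output widths shrink, reducing the channel count by 4x reduces the multiply-accumulates of each such layer by 16x, while the 640 x 640 spatial size stays the dominant factor.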

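4. Adding RIDNet step by step

4.1 Modification 1

First, create the files. Under the ultralytics/nn folder, create a directory named 'Addmodules' (if you are using the files from my group, it already exists and you do not need to create it). Then create a new .py file inside it and paste the core code into it.

4.2 Modification 2

Second, create a new .py file named '__init__.py' in the same directory (if you are using the files from my group, it already exists and you do not need to create it), then import our module inside it, as shown in the figure below.

4.3 Modification 3

Third, open 'ultralytics/nn/tasks.py' and import and register our module there (if you are using the files from my group, the import is already in place and you can go straight to step 4).

From now on, all tutorials will follow this format, because I assume you are modifying the files from my group.

4.4 Modification 4

Add the module inside parse_model in the same way I did.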
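The exact lines added to parse_model are not reproduced in the text, so the following is only a standalone sketch of the kind of branch such a registration needs: deciding which constructor arguments a layer receives and how many channels it outputs. `resolve_layer` is a hypothetical function written for this illustration; it is not part of ultralytics and is not the author's actual change. Width scaling of the Conv output channels is deliberately left out.

```python
def resolve_layer(module_name, in_channels, yaml_args):
    """Return (constructor_args, out_channels) for one YAML layer entry.

    Hypothetical stand-in for a branch inside a model-parsing function.
    """
    if module_name == "RIDNET":
        # RIDNET takes one positional argument (unused by the class itself)
        # and returns an image with the same 3 channels it receives.
        return [in_channels], in_channels
    if module_name == "Conv":
        # Conv entries look like [out_channels, kernel, stride] in the YAML.
        out_channels = yaml_args[0]
        return [in_channels, *yaml_args], out_channels
    raise KeyError(f"unknown module: {module_name}")


# Walk the first two backbone layers, starting from a 3-channel image.
channels = 3
for name, yaml_args in (("RIDNET", []), ("Conv", [64, 3, 2])):
    ctor_args, channels = resolve_layer(name, channels, yaml_args)
    print(name, ctor_args, "->", channels, "channels")
```

The point of the sketch is that RIDNET needs its own rule: its YAML args list is empty, yet its constructor expects one argument, and the layer after it must still see 3 input channels.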

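That completes the modifications. You can now copy the yaml file below and run it.

5. RIDNet yaml file and training record

5.1 RIDNet yaml file

Training summary for this version: YOLO11-RIDNet summary: 475 layers, 2,685,742 parameters, 2,685,726 gradients, 26.2 GFLOPs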

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, RIDNET, []] # 0 (denoising front end, keeps the input resolution)
  - [-1, 1, Conv, [64, 3, 2]] # 1-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 2-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 4-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 6-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 8-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 10
  - [-1, 2, C2PSA, [1024]] # 11

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 7], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 14

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 5], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 17 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 14], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 20 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 11], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 23 (P5/32-large)

  - [[17, 20, 23], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
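Because RIDNET sits at index 0 and does not downsample, every later layer index is one higher than it would be without it, which is easy to get wrong in the Concat and Detect entries. The short sketch below checks the backbone indices used by the head. The stride list is transcribed by hand from the YAML above (stride 2 for each Conv, 1 for everything else), and `cumulative_strides` is a hypothetical helper, not an ultralytics function.

```python
# (module name, stride) for backbone layers 0-11 of the YAML above.
backbone = [
    ("RIDNET", 1), ("Conv", 2), ("Conv", 2), ("C3k2", 1),
    ("Conv", 2), ("C3k2", 1), ("Conv", 2), ("C3k2", 1),
    ("Conv", 2), ("C3k2", 1), ("SPPF", 1), ("C2PSA", 1),
]


def cumulative_strides(layers):
    """Total downsampling factor at the output of each layer."""
    strides, total = [], 1
    for _, stride in layers:
        total *= stride
        strides.append(total)
    return strides


strides = cumulative_strides(backbone)

# The head concatenates with backbone layers 5 (P3), 7 (P4) and 11 (P5).
for index in (5, 7, 11):
    name = backbone[index][0]
    print(f"layer {index} ({name}): stride {strides[index]}")
```

If you move RIDNET to a different position or remove it, the indices in the head need to be shifted accordingly.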

5.2 Training code

Create a .py file, paste in the code below, and set your own file paths; it is then ready to run.

```python
import warnings

from ultralytics import YOLO

warnings.filterwarnings('ignore')

if __name__ == '__main__':
    model = YOLO(r'replace with the path to the RIDNet yaml file from section 5.1')
    # model.load('yolov8n.pt')  # loading pretrain weights
    model.train(data=r'replace with the path to your dataset yaml file',
                # For other tasks, edit `task` in 'ultralytics/cfg/default.yaml'
                # (detect, segment, classify, pose)
                cache=False,
                imgsz=640,
                epochs=150,
                single_cls=False,  # whether this is single-class detection
                batch=4,
                close_mosaic=10,
                workers=0,
                device='0',
                optimizer='SGD',  # using SGD
                # resume='',  # to resume training, set this to the path of last.pt
                amp=False,  # disable AMP if the training loss becomes NaN
                project='runs/train',
                name='exp',
                )
```
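Since the script depends on two paths that you fill in yourself, a quick check before launching training can save a failed run. This is a minimal sketch using only the standard library; `missing_paths` is a hypothetical helper and the two file names are placeholders, not paths from this article.

```python
from pathlib import Path


def missing_paths(*paths):
    """Return the given paths that do not point to an existing file."""
    return [str(p) for p in paths if not Path(p).is_file()]


# Placeholder names: replace them with your own model and dataset yaml paths.
problems = missing_paths("my-ridnet-model.yaml", "my-dataset.yaml")
if problems:
    print("Fix these paths before training:", problems)
else:
    print("All paths found.")
```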

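5.3 Screenshot of the RIDNet training run

6. Summary

That concludes the main content of this article. I recommend my column on effective YOLOv11 improvements. The column is newly opened and currently has an average quality score of 98. Going forward I will reproduce papers from the latest top conferences and also add to some of the older improvement mechanisms. If this article helped you, subscribe to the column and follow along for further updates.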